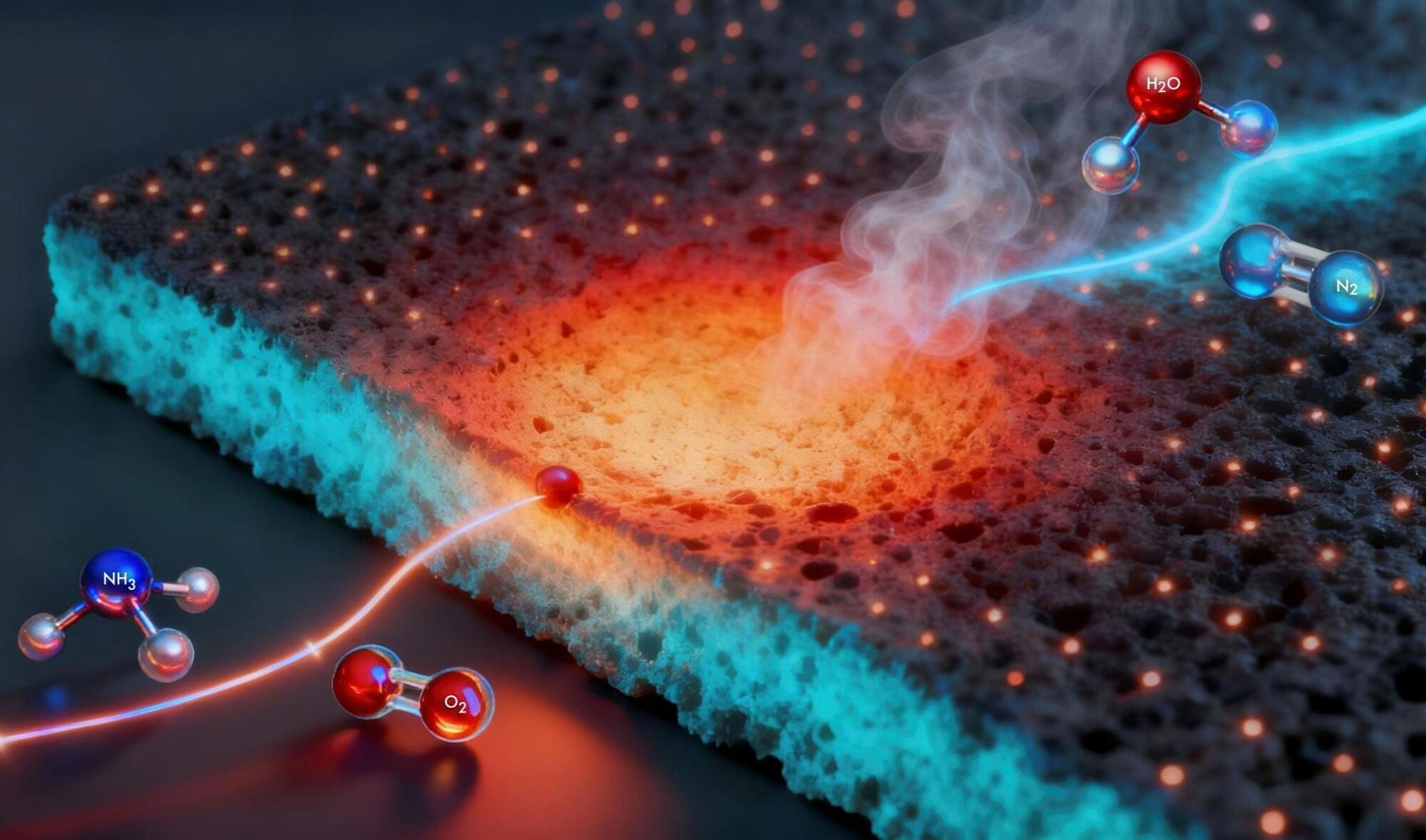

Ultrafast lasers emit pulses lasting only a few hundred femtoseconds (quadrillionths of a second). These flashes of light power applications from precision micromachining to eye surgery to optical frequency combs, the Nobel Prize-winning technology behind today’s most precise optical atomic clocks. Yet despite more than two decades of effort, ultrafast lasers have largely remained bulky, expensive systems confined to optical tables.

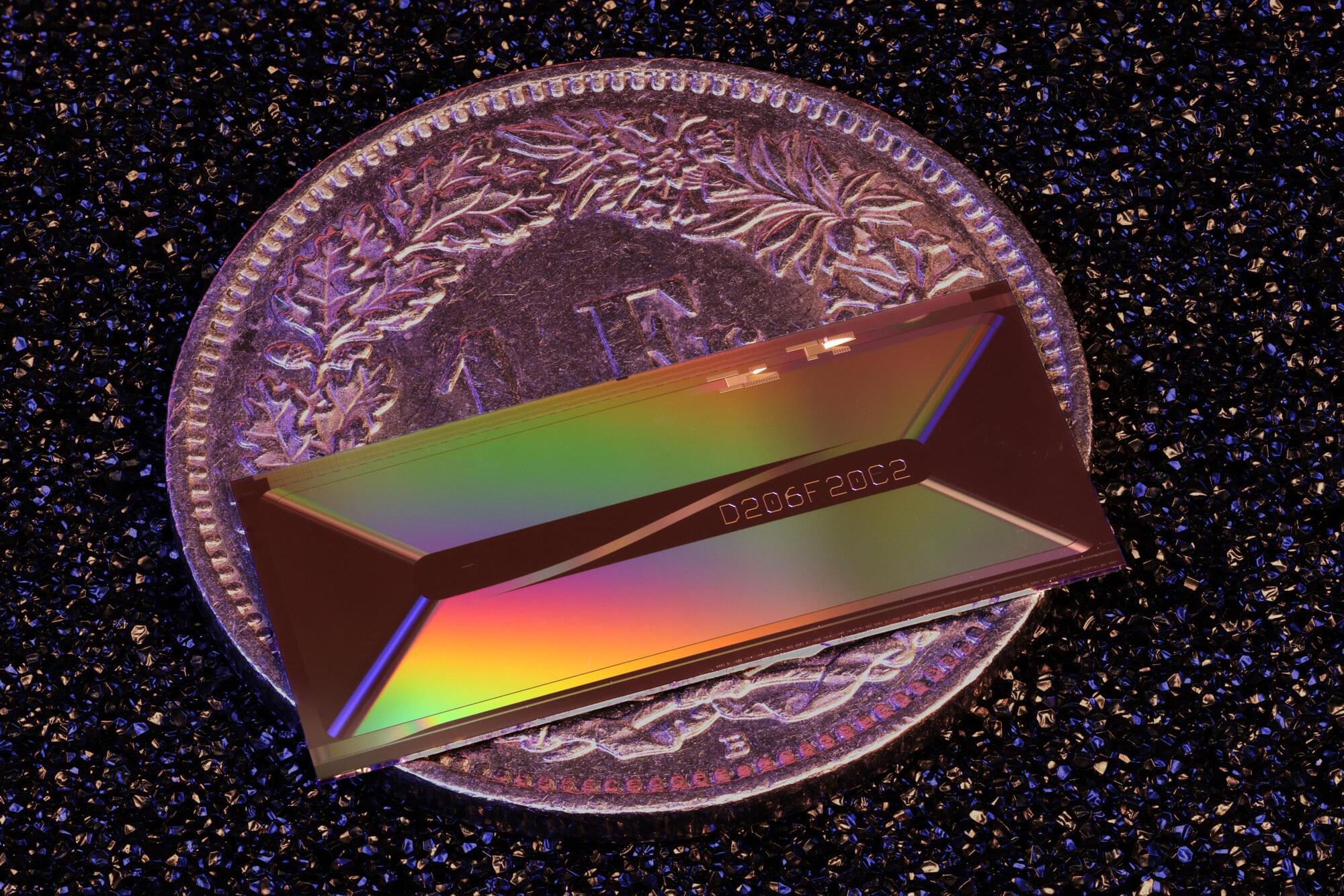

Now a team led by Professor Tobias J. Kippenberg at EPFL has brought them onto a photonic chip. Publishing in Nature, the researchers report the first integrated ultrafast laser to rival tabletop femtosecond lasers, delivering pulses with an energy of 1.05 nanojoules and durations as short as 147 femtoseconds.
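To get a feel for what a nanojoule squeezed into about 150 femtoseconds means, the short sketch below estimates the pulse's peak power. It rests on two assumptions the article does not confirm: that the quoted energy and duration describe the same pulse, and that the pulse has a sech² or Gaussian temporal shape. The names `peak_power_watts`, `SECH2_FACTOR` and `GAUSSIAN_FACTOR` are illustrative only.

```python
import math

# Assumption: peak power of a pulse with a known shape is
# P_peak = k * E / tau_FWHM, where k depends on the pulse profile.
# sech^2 profile (common for soliton mode-locked lasers): k = ln(1 + sqrt(2)) ≈ 0.881
SECH2_FACTOR = math.log(1 + math.sqrt(2))
# Gaussian profile: k = 2 * sqrt(ln 2 / pi) ≈ 0.939
GAUSSIAN_FACTOR = 2 * math.sqrt(math.log(2) / math.pi)


def peak_power_watts(energy_j: float, fwhm_s: float,
                     shape_factor: float = SECH2_FACTOR) -> float:
    """Approximate peak power (W) from pulse energy (J) and FWHM duration (s)."""
    return shape_factor * energy_j / fwhm_s


energy = 1.05e-9     # 1.05 nJ, figure quoted in the article
duration = 147e-15   # 147 fs, figure quoted in the article

print(f"sech^2 pulse:   {peak_power_watts(energy, duration) / 1e3:.1f} kW")
print(f"Gaussian pulse: {peak_power_watts(energy, duration, GAUSSIAN_FACTOR) / 1e3:.1f} kW")
```

Under either assumed shape the estimate lands in the range of several kilowatts of peak power, even though the pulse energy is only about a billionth of a joule. This is the property that makes femtosecond pulses useful for micromachining and nonlinear optics.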

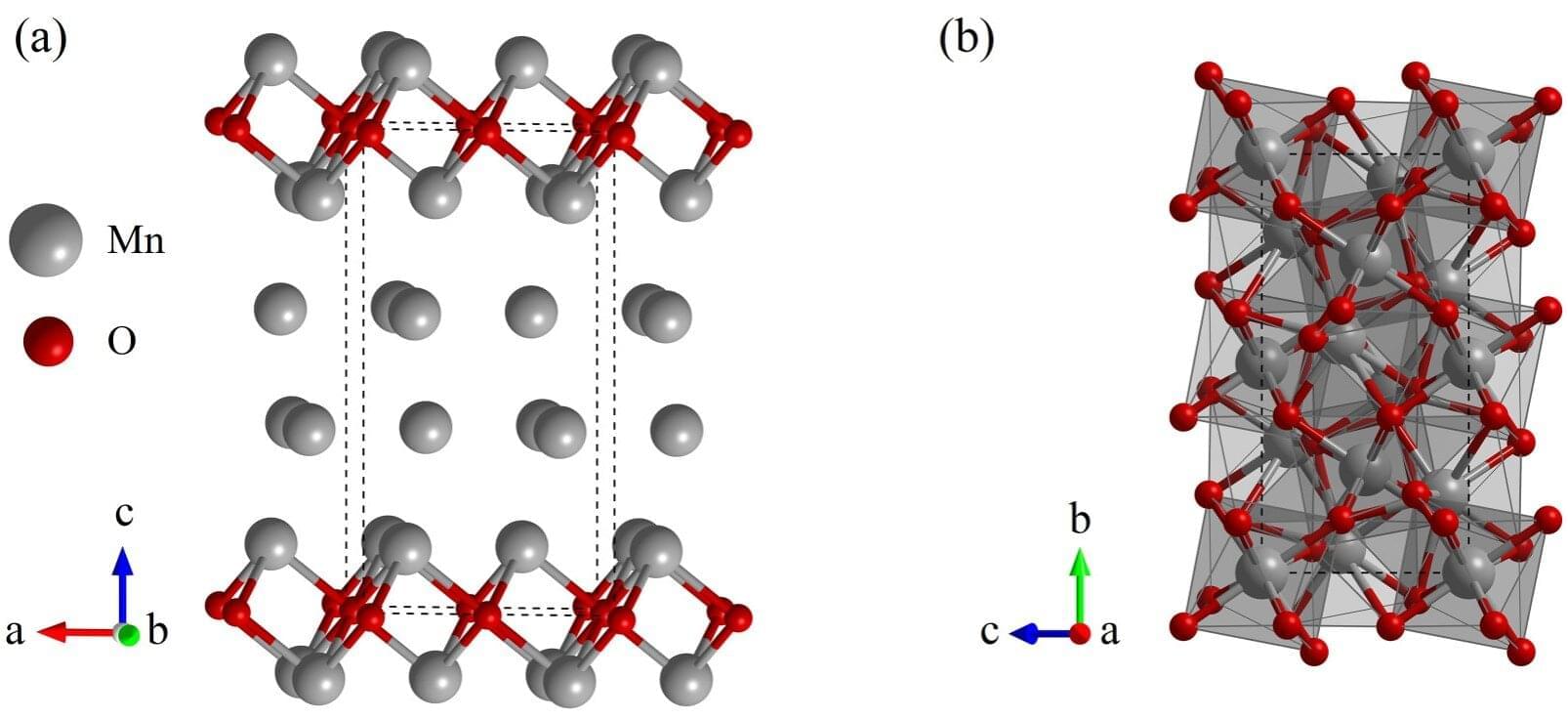

Photonic chips guide and process light in microscopic channels called waveguides patterned on a wafer, similar to how electronic microchips route electricity. Already widely used in telecommunications, photonic chips have miniaturized complex functions that once required much larger systems.