Are two sets of data genuinely different, or is the apparent difference just due to randomness? This question, known as the two-sample testing problem, becomes notoriously difficult with modern datasets, which are often high-dimensional and complex, and which can differ from one another in countless subtle ways.

“Simply put, we don’t know what differences to look for, the possibilities are bewildering,” says Professor Victor Panaretos at EPFL’s Institute of Mathematics.

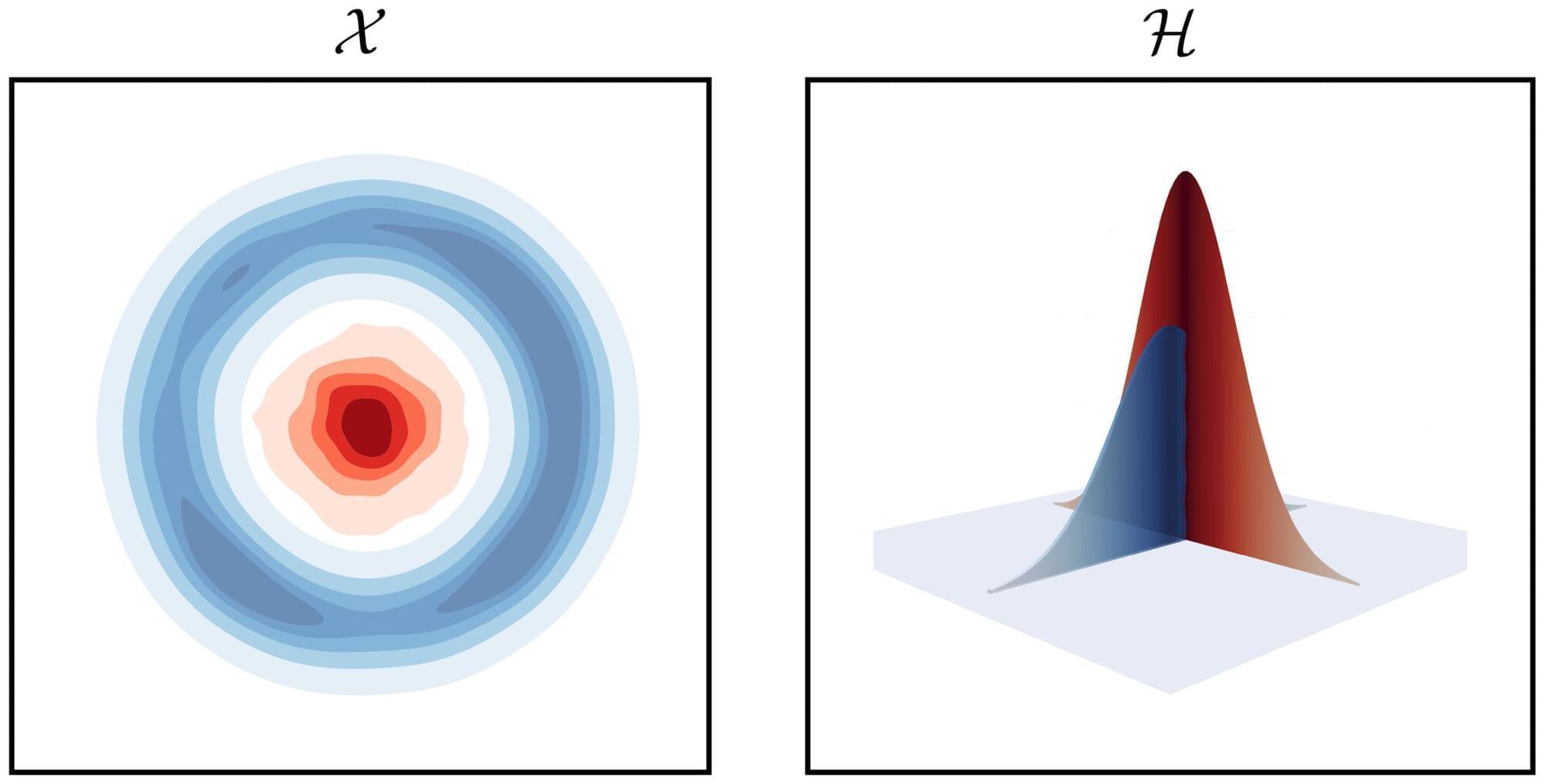

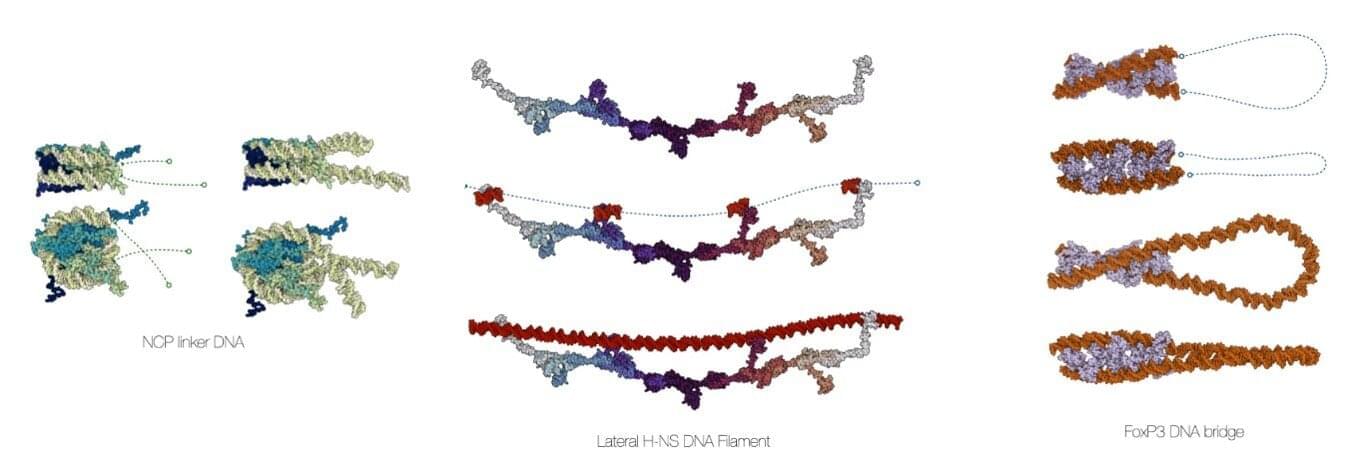

To tackle the problem, mathematicians have developed so-called “kernel methods,” which have emerged as powerful tools and are now widely used in fields such as genomics, finance, and artificial intelligence.
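
For readers who want a concrete picture, here is a minimal sketch of one common kernel two-sample test: the maximum mean discrepancy (MMD) with a Gaussian kernel, calibrated by permutation. It is offered as a generic illustration only, not as the method discussed in this article. The function names, the bandwidth of 1.0, and the number of permutations are all assumptions chosen for the example.

```python
import math
import random


def gaussian_kernel(x, y, bandwidth=1.0):
    """Gaussian (RBF) kernel between two points given as sequences of floats."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(xs, ys, bandwidth=1.0):
    """Biased (V-statistic) estimate of the squared MMD between two samples."""
    def mean_kernel(a, b):
        total = sum(gaussian_kernel(p, q, bandwidth) for p in a for q in b)
        return total / (len(a) * len(b))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


def permutation_p_value(xs, ys, n_permutations=100, bandwidth=1.0, seed=0):
    """Estimate how often a random relabeling of the pooled data produces an
    MMD at least as large as the observed one (assumed settings, see above)."""
    rng = random.Random(seed)
    observed = mmd_squared(xs, ys, bandwidth)
    pooled = list(xs) + list(ys)
    n = len(xs)
    exceed = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        if mmd_squared(pooled[:n], pooled[n:], bandwidth) >= observed:
            exceed += 1
    # The +1 terms count the observed labeling itself, so the p-value is never 0.
    return (exceed + 1) / (n_permutations + 1)
```

A small p-value suggests the two samples come from different distributions, while a large one means the observed discrepancy is in line with what random relabeling alone produces. In practice the result can depend heavily on the kernel and its bandwidth, which this sketch fixes arbitrarily.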