As artificial intelligence systems grow larger and more powerful, their energy demands are rising steeply. But recent research from the University of Massachusetts Amherst, published in Nature Communications, suggests that advanced AI capabilities may be achievable with far lower energy consumption.

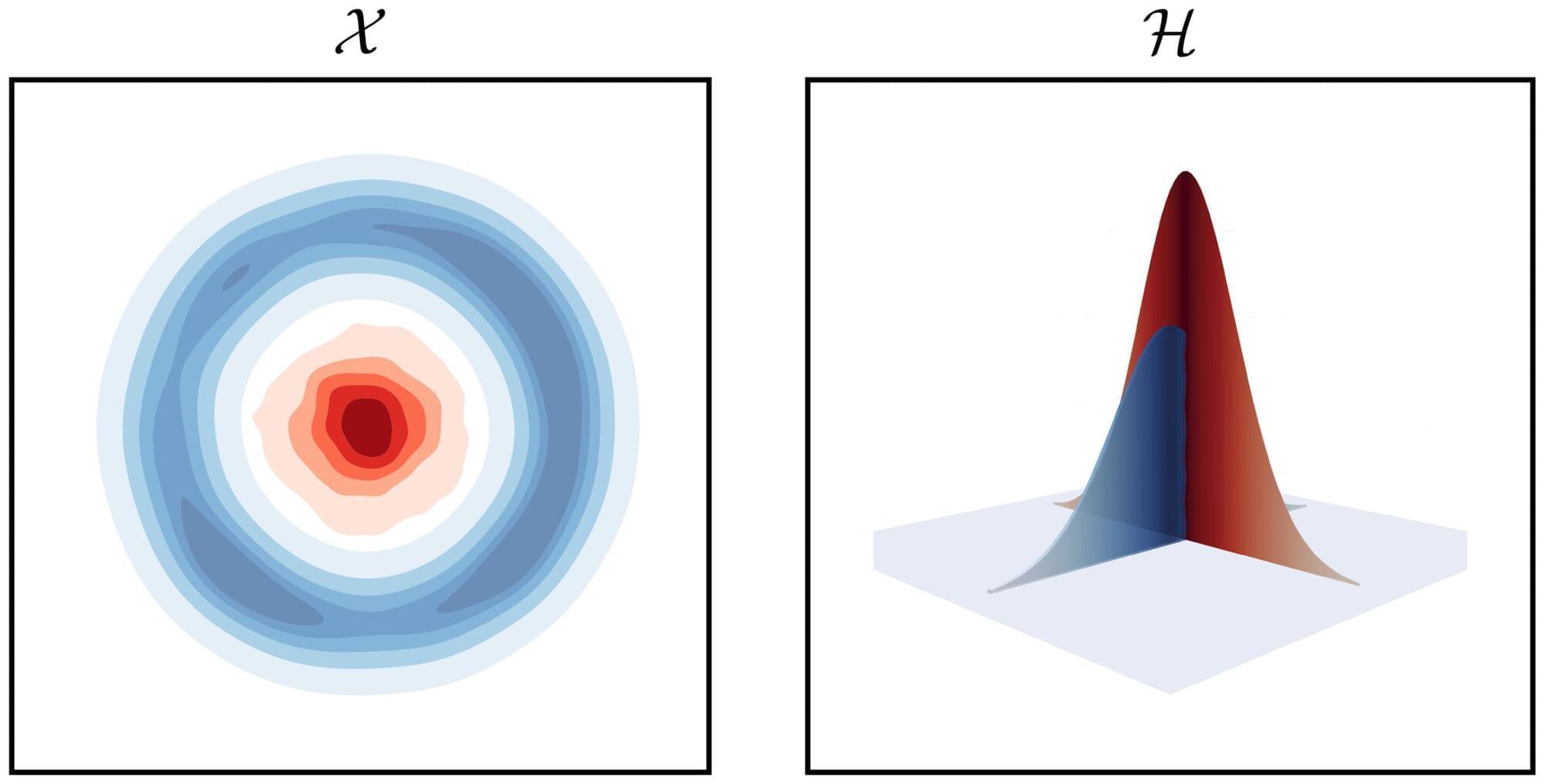

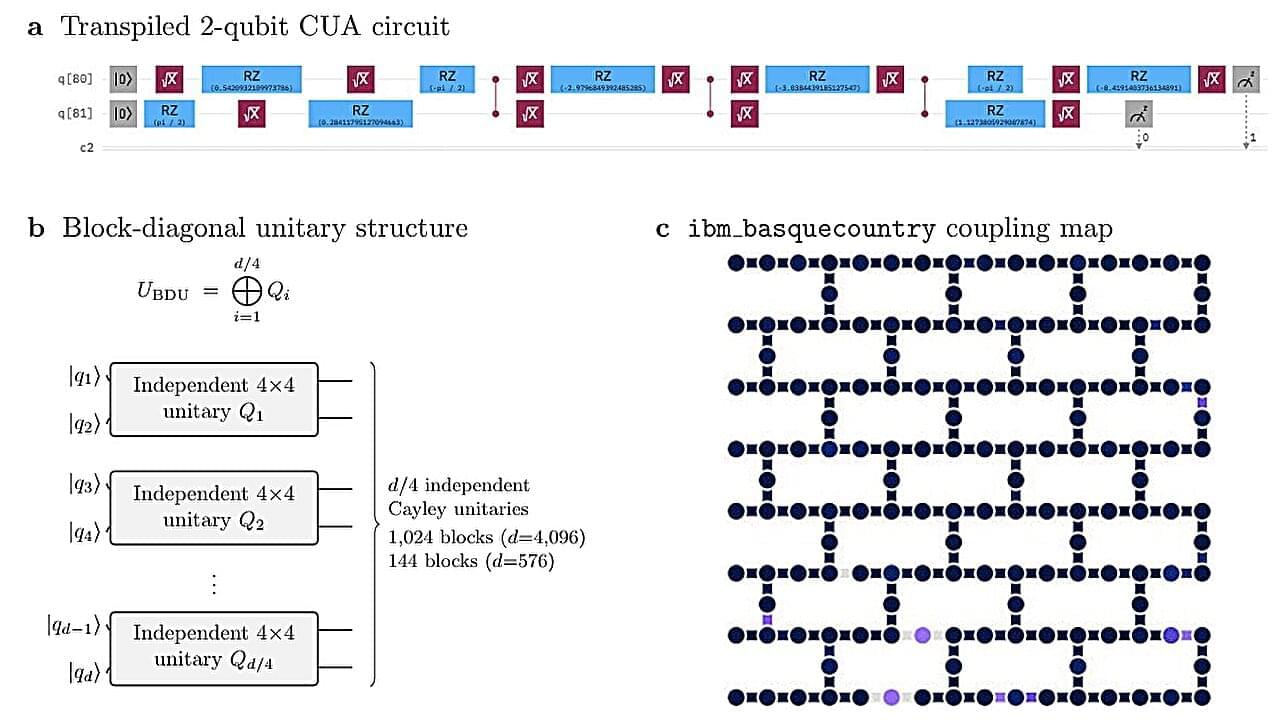

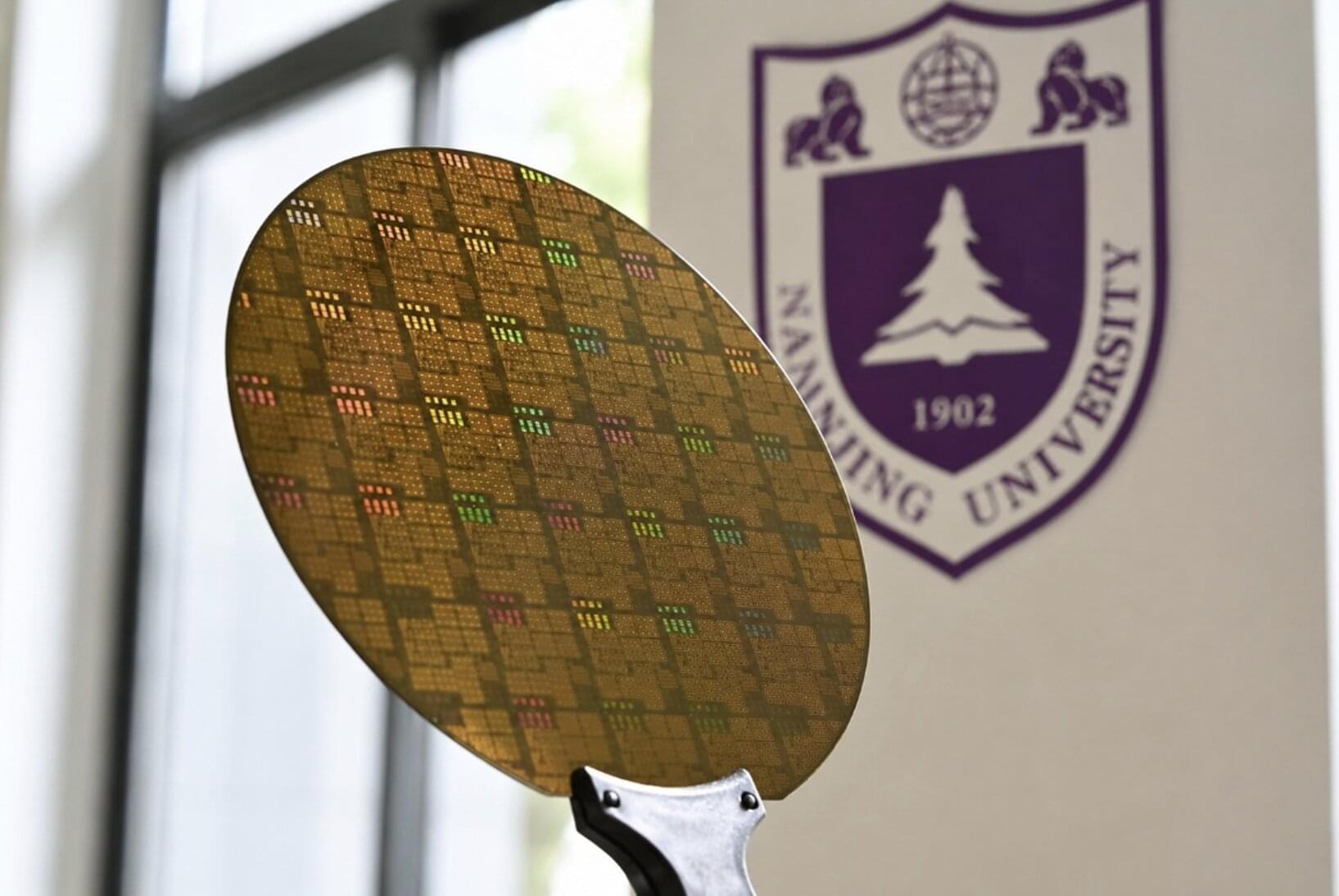

A team led by Hava Siegelmann, Provost Professor in the Manning College of Information and Computer Sciences at UMass Amherst, has developed a novel AI system that more closely mirrors key aspects of how the human brain operates. Siegelmann and her lab have focused on two complementary goals: enabling AI systems to learn continuously in real time rather than only during a fixed training phase, and sharply reducing the energy required for intelligent computation.

“Current AI systems are extraordinarily powerful, but they are also extraordinarily energy-hungry,” said Siegelmann. “Our work shows that it is possible to design AI that remains highly capable while operating much more efficiently.”