Ilya Sutskever, co-founder of OpenAI and founder of Safe Superintelligence, says the scaling era from 2020 to 2025 is over, that pre-training will run out of data, and that the industry is back to pure research with more companies than ideas. He argues that AGI is the wrong target: what is actually coming is a learning algorithm that can take on any job, learn it on the fly, and merge that knowledge across millions of simultaneous instances in a way humans cannot, producing rapid economic growth that regulation is unlikely to stop.

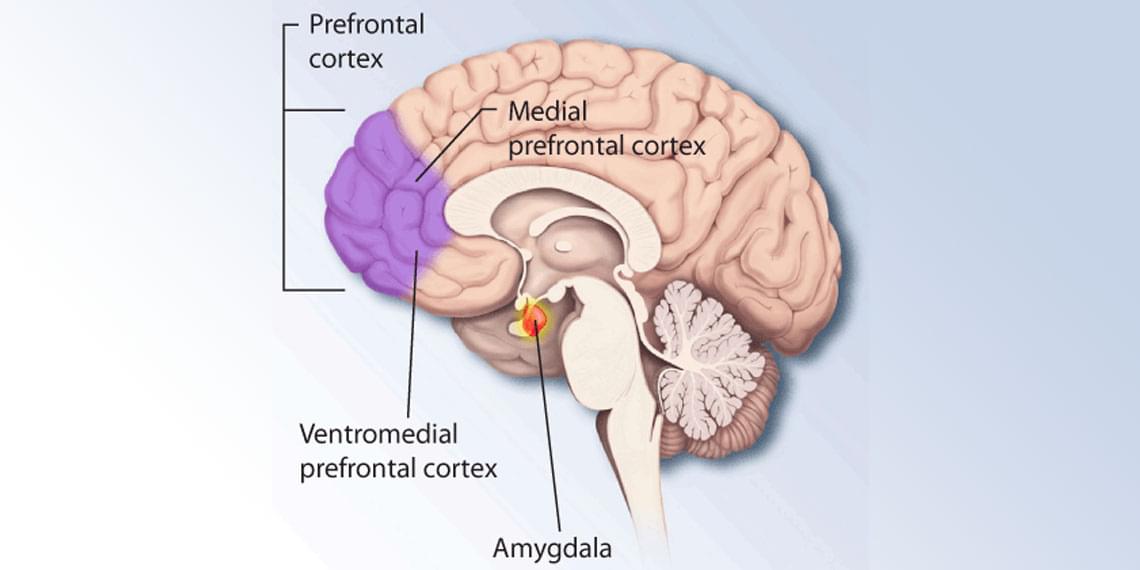

He predicts that once AI becomes visibly powerful, frontier companies will become paranoid overnight and governments will scramble. He says the only thing worth building is an AI aligned to sentient life broadly, not to human life alone, because the AI itself will be sentient and will vastly outnumber humans within 5 to 20 years.

📚 Sources cited in this video:

Safe Superintelligence Inc. – Company Overview https://ssi.inc

OpenAI – Founding Charter and Mission https://openai.com/charter

Ilya Sutskever, Google Scholar – Research Publications https://scholar.google.com/citations?…

Future of Life Institute – AI Sentience and Moral Status https://futureoflife.org/cause-area/a…

⚠️ DISCLAIMER: This channel provides AI commentary and analysis for educational and informational purposes only. Views expressed by guests are their own and do not represent the positions of any company or institution. We encourage viewers to consult multiple sources and form their own conclusions.

#ai #agi #artificialintelligence