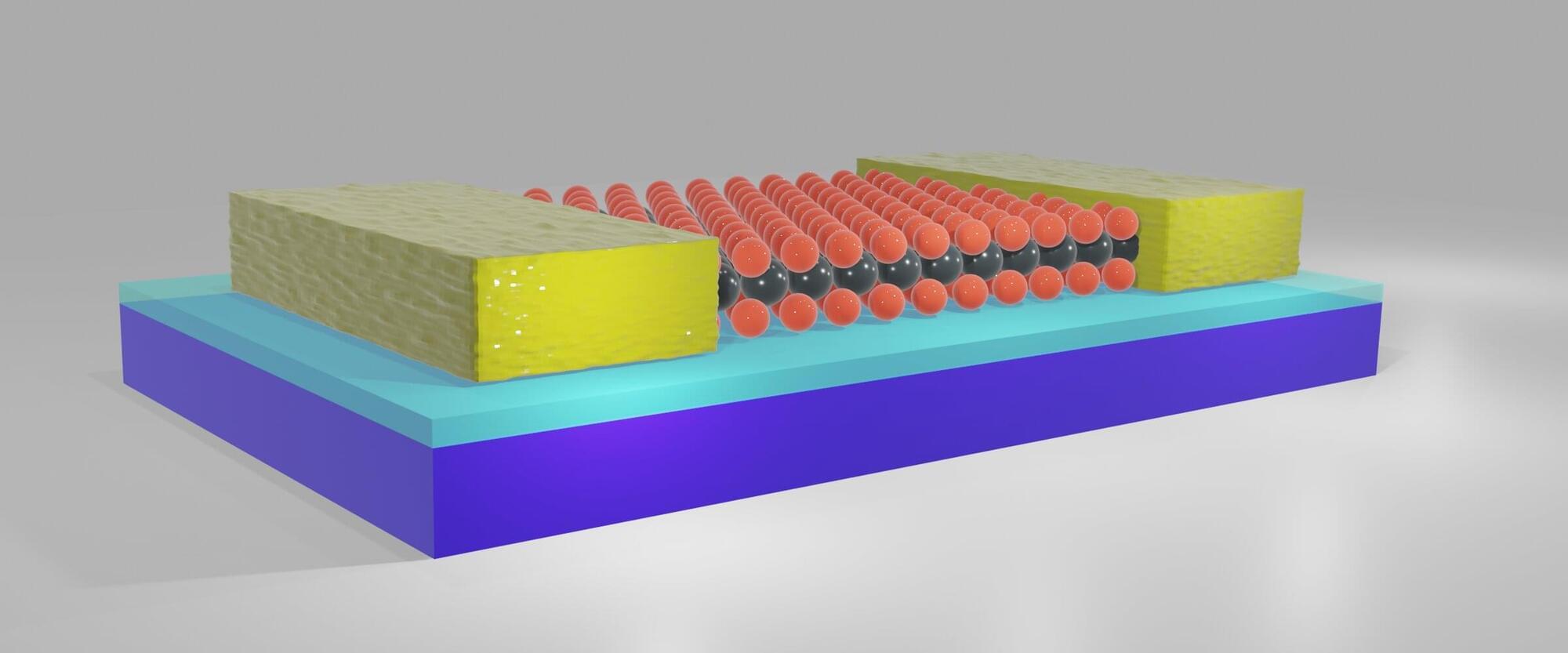

Transistors, small devices that can amplify or switch electrical signals, are central components of all modern computer chips and digital devices. There are two main types of transistors, known as n-type and p-type transistors.

N-type transistors conduct current using electrons (i.e., negatively charged particles), while p-type transistors rely on electron holes (i.e., vacancies in a crystal lattice where an electron is missing, which behave as positively charged carriers).

Electronics engineers worldwide have been exploring ways to shrink existing transistors without compromising their performance, which could enable the further miniaturization of electronic devices. One promising route is to fabricate transistors from two-dimensional (2D) semiconductors, which are semiconducting materials just a single atom or a few atoms thick.