Artificial intelligence is not replacing human intuition in these fields, but reimagining how questions are asked, explored and understood.

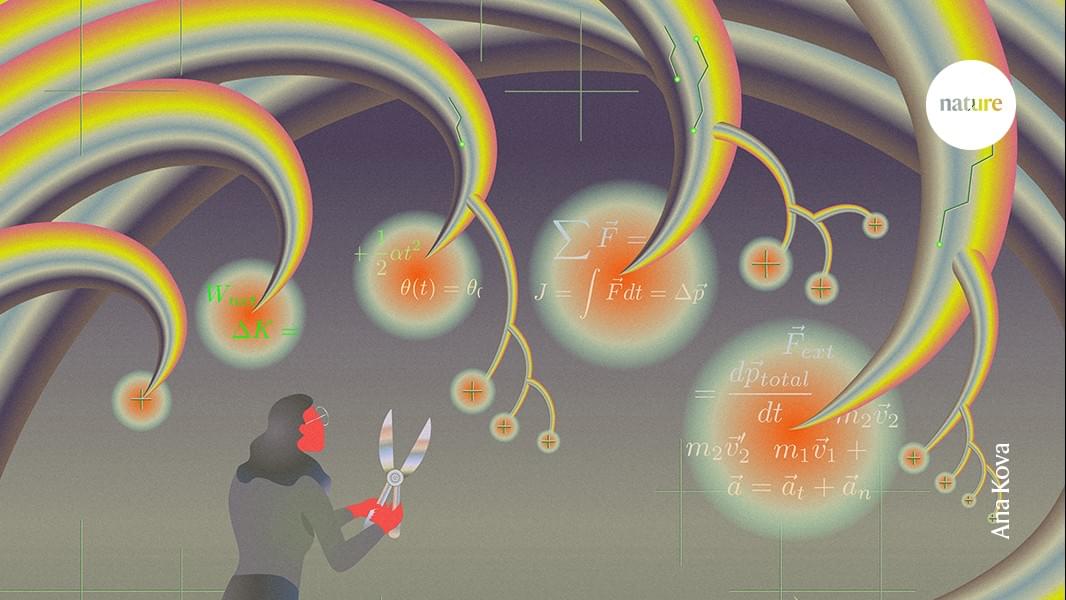

A new theoretical study may have cracked one of the most puzzling discoveries of the James Webb Space Telescope (JWST): Little Red Dots, spotted across the early universe. The paper, posted to the arXiv preprint server on May 29, argues that these objects could be black holes caught in rare, violent bursts of feeding at a rate exceeding theoretical limits.

Since JWST began its survey of the deep universe, astronomers have been puzzled by a class of tiny, faint objects appearing in the early universe in far greater numbers than expected. They have a distinctive V-shaped spectrum—bright in both ultraviolet and optical light, but with a dip in between—along with broad emission lines hinting at active black holes. They also show an absence of X-ray, radio and infrared emission.

They don’t look like ordinary galaxies, and they don’t completely look like quasars, either. What they are has been an open question. Some researchers argue that Little Red Dots may need some outside-the-box physics to explain their origin and nature.

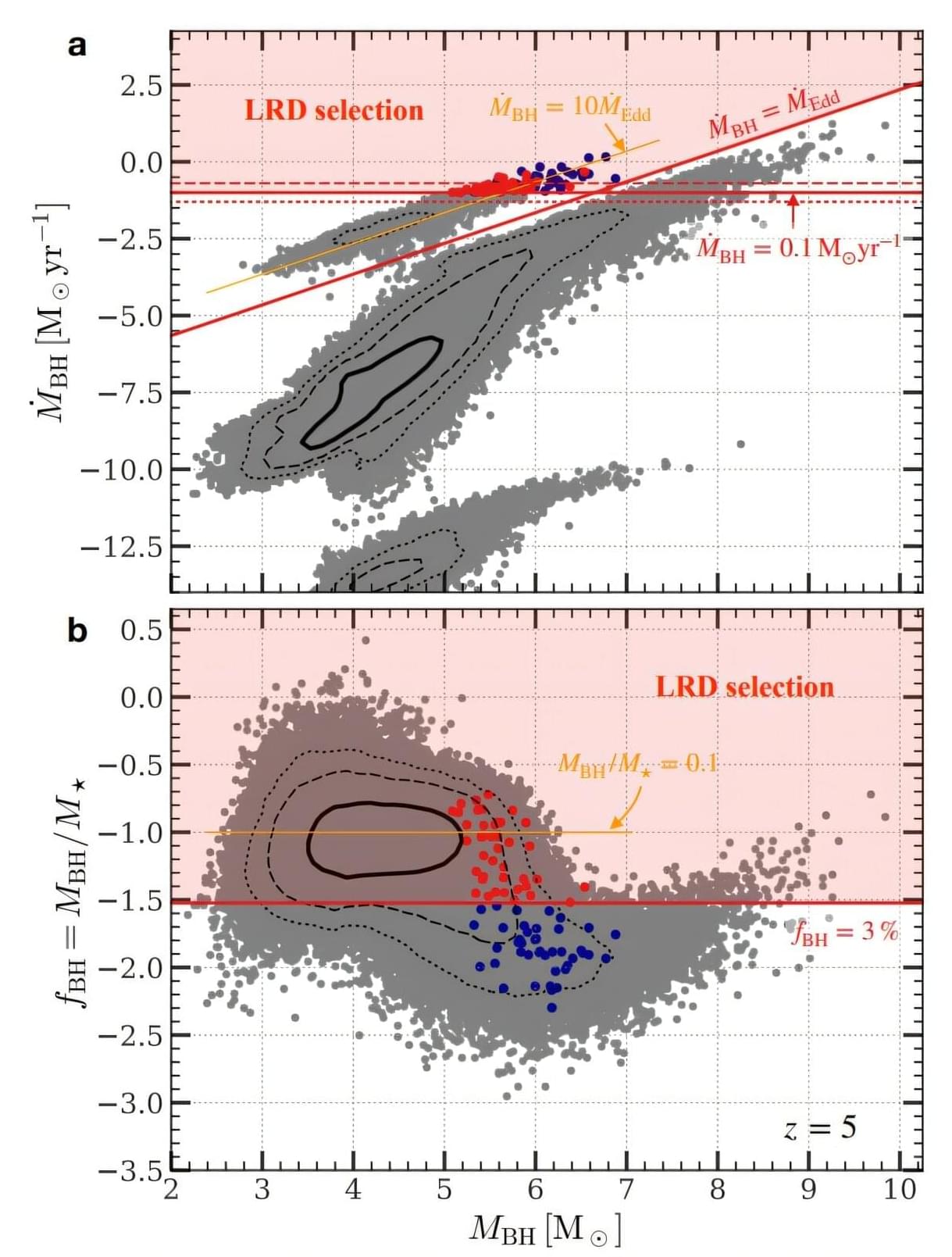

Using a novel simulation model based on machine learning, an international research team at GSI/FAIR has succeeded in gaining a deeper understanding of element formation in stellar events such as neutron star mergers. For the first time, the scientists used deep learning with a neural network to model the energy release during r-process nucleosynthesis in hydrodynamic simulations. The results are published in the journal Physical Review D.

Many of the chemical elements we know are created in massive stellar events such as exploding stars or neutron star mergers. These events release incredible amounts of energy, allowing for the production of heavy nuclides. One key nuclear production process is the so-called rapid neutron-capture process, or r-process, in which existing nuclei capture free neutrons, some of which then convert into protons through beta decay, creating larger, heavier atomic nuclei.
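The bookkeeping behind the r-process can be sketched in a few lines of Python. This is a toy illustration only, not the RHINE model or anything taken from the paper: capturing a neutron raises the mass number A by one, and a beta-minus decay turns one neutron into a proton, raising the proton number Z by one while leaving A unchanged. The `Nuclide` type, the function names and the fixed number of captures per decay are all hypothetical choices made for this example.

```python
from typing import NamedTuple


class Nuclide(NamedTuple):
    """A nucleus described by proton number Z and mass number A."""
    protons: int       # Z
    mass_number: int   # A = protons + neutrons

    @property
    def neutrons(self) -> int:
        return self.mass_number - self.protons


def capture_neutron(nuclide: Nuclide) -> Nuclide:
    """Neutron capture: A increases by one, Z is unchanged."""
    return Nuclide(nuclide.protons, nuclide.mass_number + 1)


def beta_minus_decay(nuclide: Nuclide) -> Nuclide:
    """Beta-minus decay: one neutron becomes a proton, so Z increases by one."""
    if nuclide.neutrons < 1:
        raise ValueError("no neutron available to decay")
    return Nuclide(nuclide.protons + 1, nuclide.mass_number)


def toy_r_process(seed: Nuclide, captures_per_decay: int, decays: int) -> Nuclide:
    """Alternate bursts of neutron captures with single beta decays.

    The fixed capture-to-decay ratio is an assumption for illustration;
    real reaction networks follow rates that depend on the environment.
    """
    nuclide = seed
    for _ in range(decays):
        for _ in range(captures_per_decay):
            nuclide = capture_neutron(nuclide)
        nuclide = beta_minus_decay(nuclide)
    return nuclide


if __name__ == "__main__":
    iron_56 = Nuclide(protons=26, mass_number=56)
    print(toy_r_process(iron_56, captures_per_decay=5, decays=3))
```

Starting from an iron-56 seed, three rounds of five captures and one decay give a nucleus with 29 protons and mass number 71. The point of the sketch is only that repeated captures plus decays walk a seed nucleus toward heavier elements.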

“Researchers around the world strive to make these complex reactions understandable through theoretical simulations. However, modeling all parameters requires incredible computing power, which is why the models often have to be simplified,” said Dr. Oliver Just, first author of the publication and a researcher in the Nuclear Astrophysics & Structure Department at GSI/FAIR. “Our new model, RHINE, which uses artificial intelligence, offers an efficient alternative.”

Every commercial nuclear reactor in the world runs on uranium. Uranium brings three undeniable problems. It creates weapons-grade plutonium. It melts down under pressure. Its radioactive waste lasts for tens of thousands of years.

Thorium solves all three.

Physicists have known this since the 1960s. The United States actually built a working thorium reactor. They proved the technology was viable. Then they deliberately abandoned it.

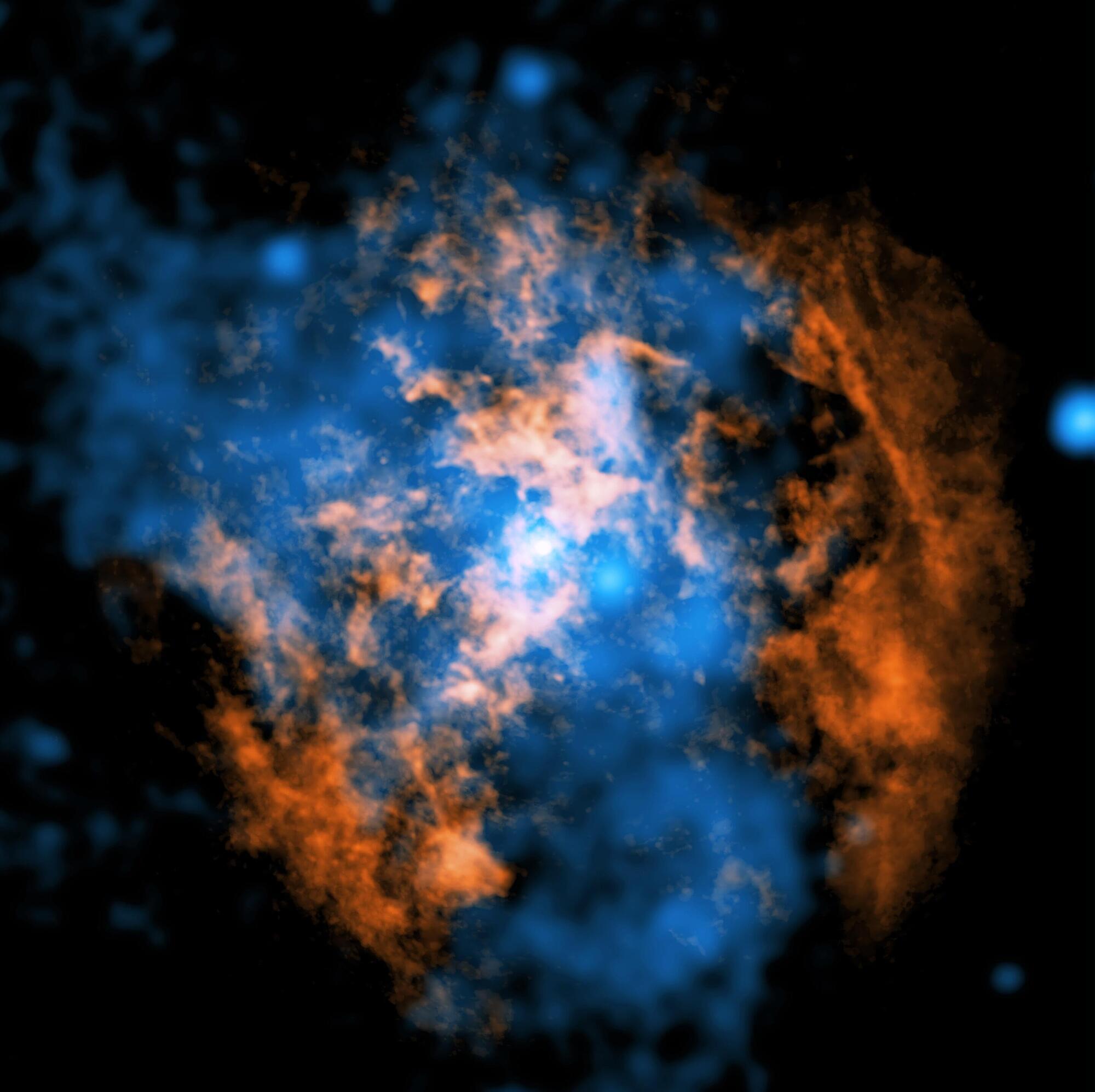

The hunt is over. After more than 50 years of searching, astrophysicists at Northwestern University have finally discovered evidence of a powerful wind blowing from the Milky Way’s central supermassive black hole, Sagittarius A* (Sgr A*).

According to theoretical physics and a long-accepted understanding of galaxies’ evolution, as black holes consume materials, they should produce wind or jets. Even a small amount of gas falling into a black hole should generate enough energy to push material outwards. Without wind, Sgr A* would be a unique outlier.

But, until now, no one could find it.

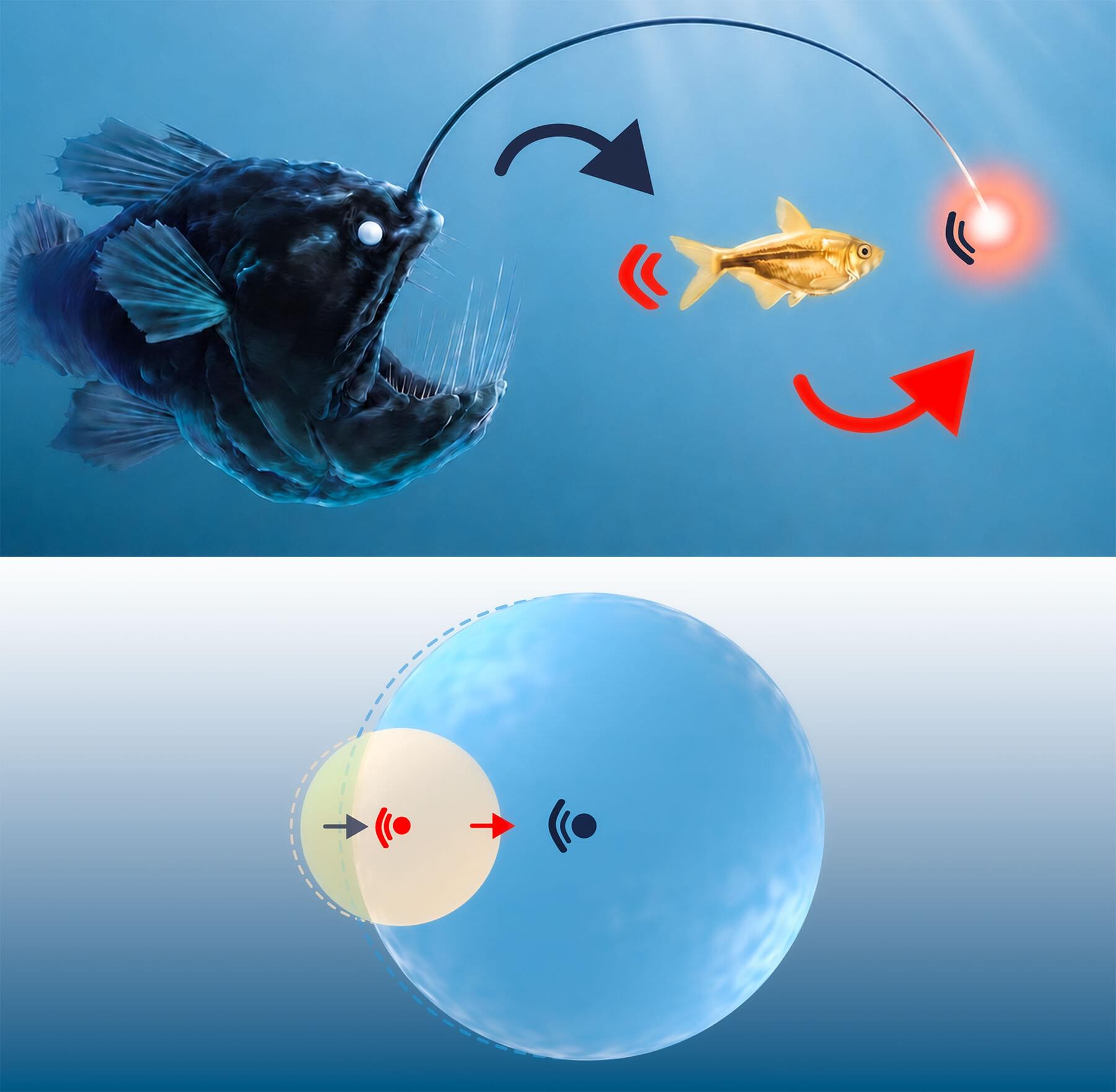

Inside cells, certain functions are carried out by locally adjusting molecular composition. This condensation of material results in the formation of dense droplets that can dynamically rearrange. Because of this, interactions between such dense regions determine the shaping of condensates. Scientists from the Department of Living Matter Physics at MPI-DS recently developed a model that can describe such phase separation dynamics based solely on attraction. The work is published in the journal Physical Review Letters.

“It’s natural to think that a system with only attractive forces would form one large, stationary condensate,” explained Jacopo Romano, first author of the study.

“However, instead we observed an unexpected emergent property of chasing dynamics resulting in movement and propulsion,” he said.

We’ve been looking for messages from the stars ever since Frank Drake pointed the Green Bank radio telescope at Tau Ceti and Epsilon Eridani 65 years ago. He saw nothing that couldn’t be explained by natural causes. Nor have the much more extensive SETI surveys conducted since. So, maybe there are no alien signals to see. Or maybe we need to update how we search for them. We have, after all, learned an awful lot since 1960, both about the galaxy and about observing the galaxy.

Hosted by Matt O’Dowd. Written by Matt O’Dowd. Post Production by Leonardo Scholzer. Directed by Andrew Kornhaber. Associate Producer: Bahar Gholipour. Executive Producer: Andrew Kornhaber. Executive in Charge for PBS: Maribel Lopez. Director of Programming for PBS: Gabrielle Ewing. Assistant Director of Programming for PBS: Mike Martin. End Credits Music by J.R.S. Schattenberg. Space Time is a production of Kornhaber Brown for PBS Digital Studios. This program is produced by Kornhaber Brown, which is solely responsible for its content. © 2026 PBS. All rights reserved.

Search the Entire Space Time Library Here: https://search.pbsspacetime.com/

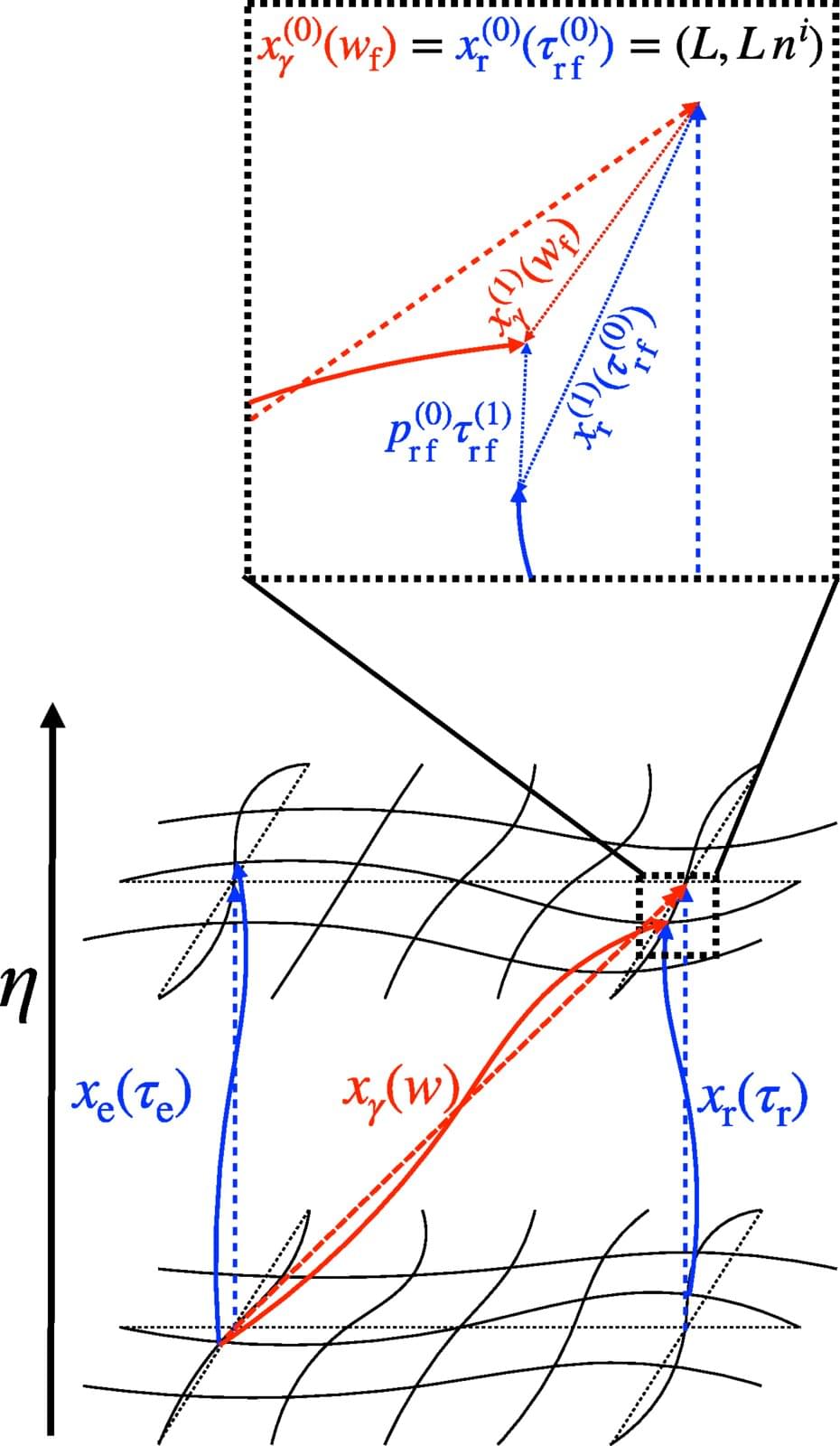

Gravitational waves are tiny ripples in spacetime. Their first direct detection in 2015 marked a revolutionary moment in astronomy. Today, we have a thorough understanding of signals that travel far from their sources through quiet, nearly empty space, such as those emitted when black holes merge. In this case, the wave can be considered a minor disturbance on a silent background. The distinction between “background” and “wave” is clear, and the quantity measured by the detector—a tiny stretching and squeezing—is clearly determined.
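To give a sense of scale for that stretching and squeezing, a detector's response is usually described by the dimensionless strain h, roughly the fractional change in length, h ≈ ΔL / L. The sketch below is a back-of-the-envelope calculation under assumed values that do not come from the article: a strain of 1e-21, a typical order of magnitude for detected mergers, and a 4 km arm, the length used by LIGO. The function name is hypothetical, and factors of order one that depend on convention and on the wave's orientation are ignored.

```python
def arm_length_change(strain: float, arm_length_m: float) -> float:
    """Approximate length change of a detector arm, using delta_L = h * L.

    Order-one factors from polarization and source direction are
    deliberately left out of this estimate.
    """
    if arm_length_m <= 0:
        raise ValueError("arm length must be positive")
    return strain * arm_length_m


if __name__ == "__main__":
    assumed_strain = 1e-21      # illustrative value, not from the article
    assumed_arm_m = 4000.0      # 4 km arm, as in LIGO
    delta = arm_length_change(assumed_strain, assumed_arm_m)
    print(f"arm length changes by about {delta:.1e} m")
```

With these assumed numbers the arm length changes by a few billionths of a billionth of a meter, far smaller than an atomic nucleus, which is why a precise definition of what the instrument records matters.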

In cosmology, however, things are more subtle. The focus shifts to the universe in its entirety—encompassing spacetime and everything contained within it, such as stars, black holes and galaxies. The background itself is dynamic. Small fluctuations in density and velocity gently stir spacetime everywhere, blurring the boundary with the wave.

But what exactly does a gravitational-wave detector measure when the entire universe is gently vibrating? Previously, theoretical predictions were entirely dependent on the choice of mathematical coordinates. However, the only meaningful quantity is what a real instrument records, which must be coordinate-independent.

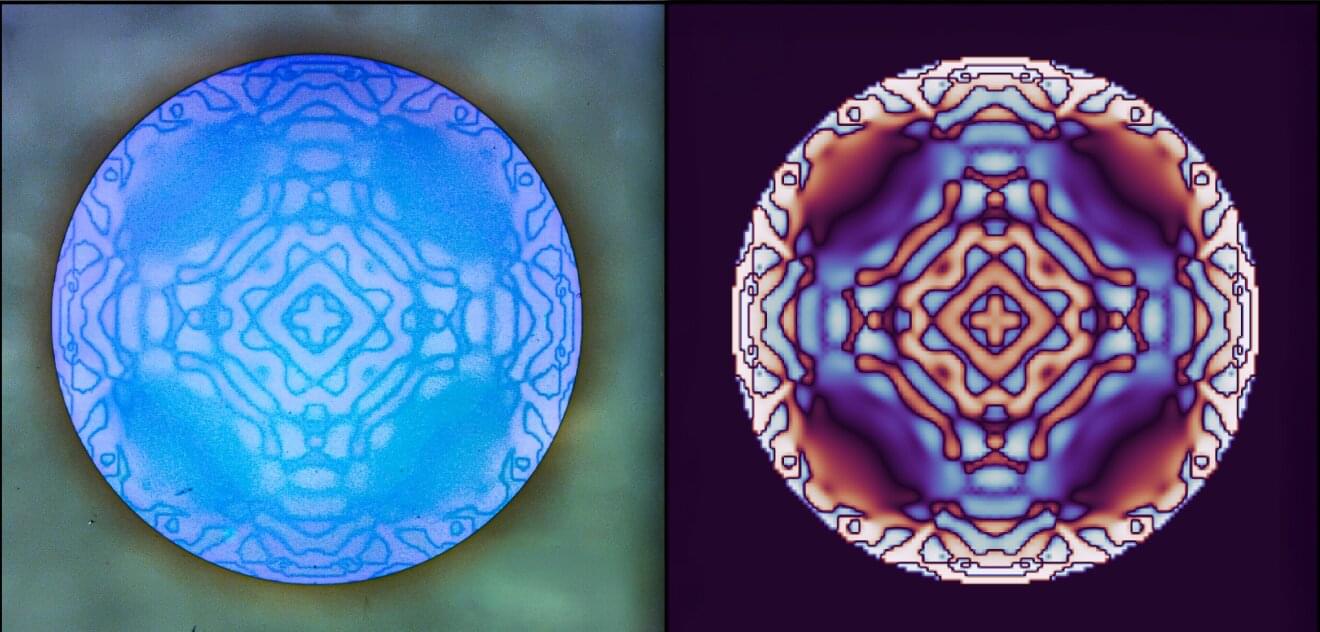

Blurry light from lens imperfections is a problem everywhere, from microscopes to telescopes to smartphone cameras. Using a tiny yet carefully engineered optical element and artificial intelligence, University of California San Diego engineers have built a way to spot and correct those distortions from a single image—a step that could make advanced optical systems faster, smaller and easier to use.

“We used a combination of fundamental physics, nanofabrication and machine learning to make hidden distortions easier to detect and correct,” said senior author Abdoulaye Ndao, an electrical and computer engineering faculty member in the Jacobs School of Engineering and an affiliate of the Qualcomm Institute at UC San Diego.

“Our fast, robust solution is tiny and easy to integrate into different optical systems,” he continued. “The weight is almost nothing, because the size of the sample can be one by one centimeter and half a millimeter thick.”

Birgitta Schultze-Bernhardt and her team at the Institute of Experimental Physics at Graz University of Technology (TU Graz) have developed a new type of UV dual-comb spectrometer that detects gaseous air pollutants with unrivaled accuracy and sensitivity. Using ultraviolet double laser light, the device measures the concentration of harmful gases such as formaldehyde within half a second.

Thanks to its compact design and a measuring range of up to two and a half kilometers, the spectrometer is not only suitable for laboratory analyses, but also for mobile measurements in cities, industrial areas and agricultural regions.

The work is published in the journal PhotoniX.