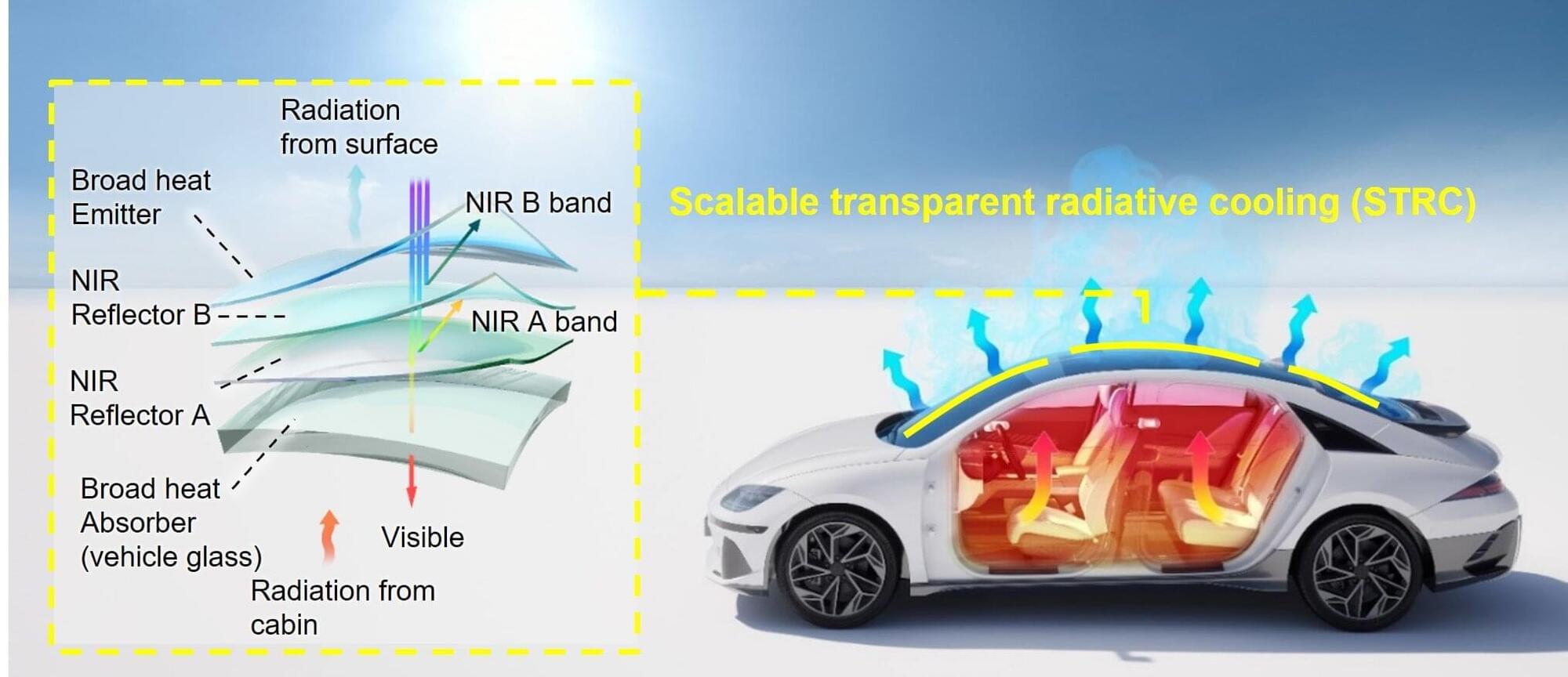

A transparent radiative cooling film that dissipates heat directly to the outside without consuming electricity has been developed to reduce vehicle overheating in summer. The film was validated in real-vehicle experiments under diverse conditions, including different countries, seasons, and both parked and driving scenarios. In those tests it lowered cabin temperatures by up to 6.1°C and reduced cooling energy consumption by more than 20%.

Seoul National University College of Engineering announced that a research team led by Prof. Seung Hwan Ko (Department of Mechanical Engineering, SNU) has designed and fabricated a large-area Scalable Transparent Radiative Cooling (STRC) film applicable to vehicle windows. The work was carried out in collaboration with Prof. Gang Chen at MIT and research teams from Hyundai Motor Company and Kia (Materials Research & Engineering Center and Thermal Energy Total Development Group). In real-vehicle evaluations under various climatic and driving conditions, the team demonstrated both energy savings and carbon reductions.

This research was published online on February 4 in the journal Energy & Environmental Science.