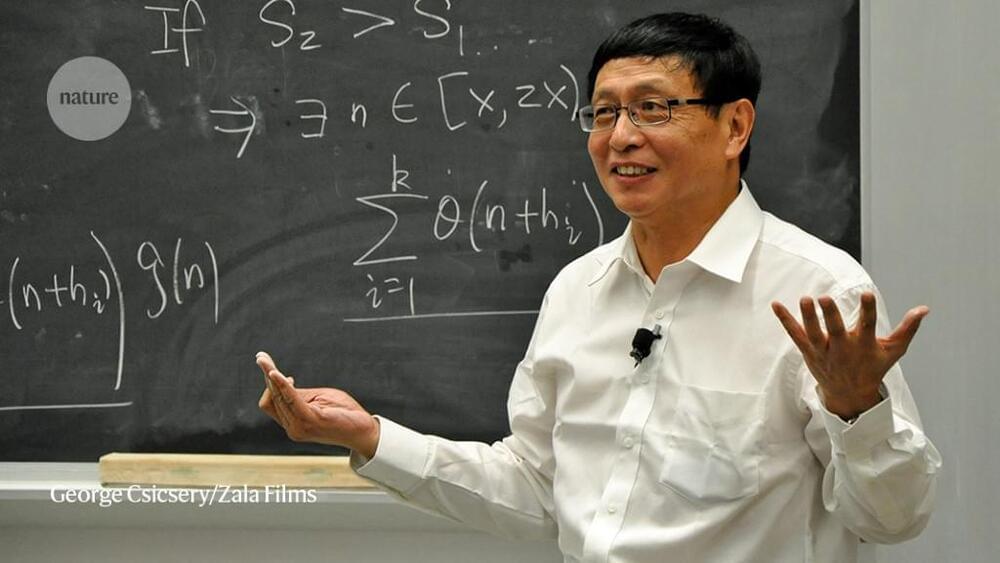

After shocking the mathematics community with a major result in 2013, Yitang Zhang now says he has solved an analogue of the celebrated Riemann hypothesis.

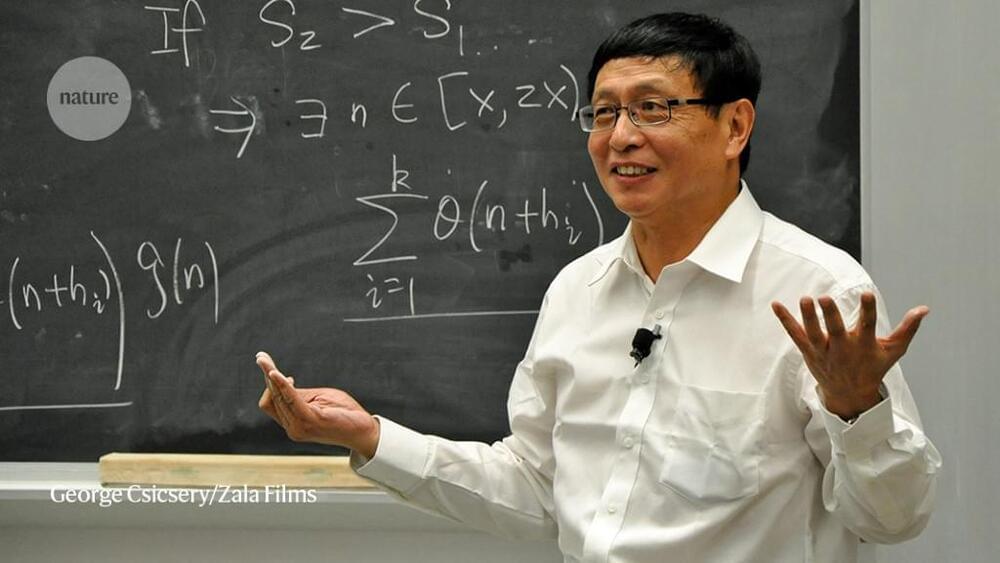

Spin glasses are alloys formed by noble metals in which a small amount of iron is dissolved. Although they do not exist in nature and have few applications, they have nevertheless been the focus of interest of statistical physicists for some 50 years. Studies of spin glasses were crucial for Giorgio Parisi’s 2021 Nobel Prize in Physics.

The scientific interest of spin glasses lies in the fact that they are an example of a complex system whose elements interact with each other in a way that is sometimes cooperative and sometimes adversarial. The mathematics developed to understand their behavior can be applied to problems arising in a variety of disciplines, from ecology to machine learning, not to mention economics.

Spin glasses are magnetic systems, that is, systems in which individual elements, the spins, behave like small magnets. Their peculiarity is the co-presence of ferromagnetic-type bonds, which tend to align the spins, with antiferromagnetic-type bonds, which tend to orient them in opposite directions.
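The competition between the two kinds of bonds can be seen in a minimal sketch. It assumes a hypothetical toy model that is not taken from the article: three spins on a triangle, each either +1 or -1, with two ferromagnetic bonds and one antiferromagnetic bond, and an energy that drops by one unit for each satisfied bond. The names `COUPLINGS`, `energy`, and `ground_states` are illustrative only.

```python
from itertools import product

# Hypothetical toy model: three spins on a triangle.
# J > 0 is a ferromagnetic bond (favours aligned spins),
# J < 0 is an antiferromagnetic bond (favours opposite spins).
COUPLINGS = {(0, 1): 1, (1, 2): 1, (0, 2): -1}


def energy(spins, couplings=COUPLINGS):
    """Energy E = -sum over bonds of J_ab * s_a * s_b."""
    return -sum(j * spins[a] * spins[b] for (a, b), j in couplings.items())


def ground_states(n_spins=3, couplings=COUPLINGS):
    """Enumerate every configuration and keep those with the lowest energy."""
    configs = list(product((-1, 1), repeat=n_spins))
    e_min = min(energy(s, couplings) for s in configs)
    return e_min, [s for s in configs if energy(s, couplings) == e_min]


e_min, states = ground_states()
print(e_min, len(states))
```

If all three bonds could be satisfied at once, the lowest energy would be -3. In this toy triangle no configuration achieves that: the best available energy is -1, and several different configurations share it. That inability to satisfy every bond is a small-scale version of the mix of cooperation and conflict described above.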

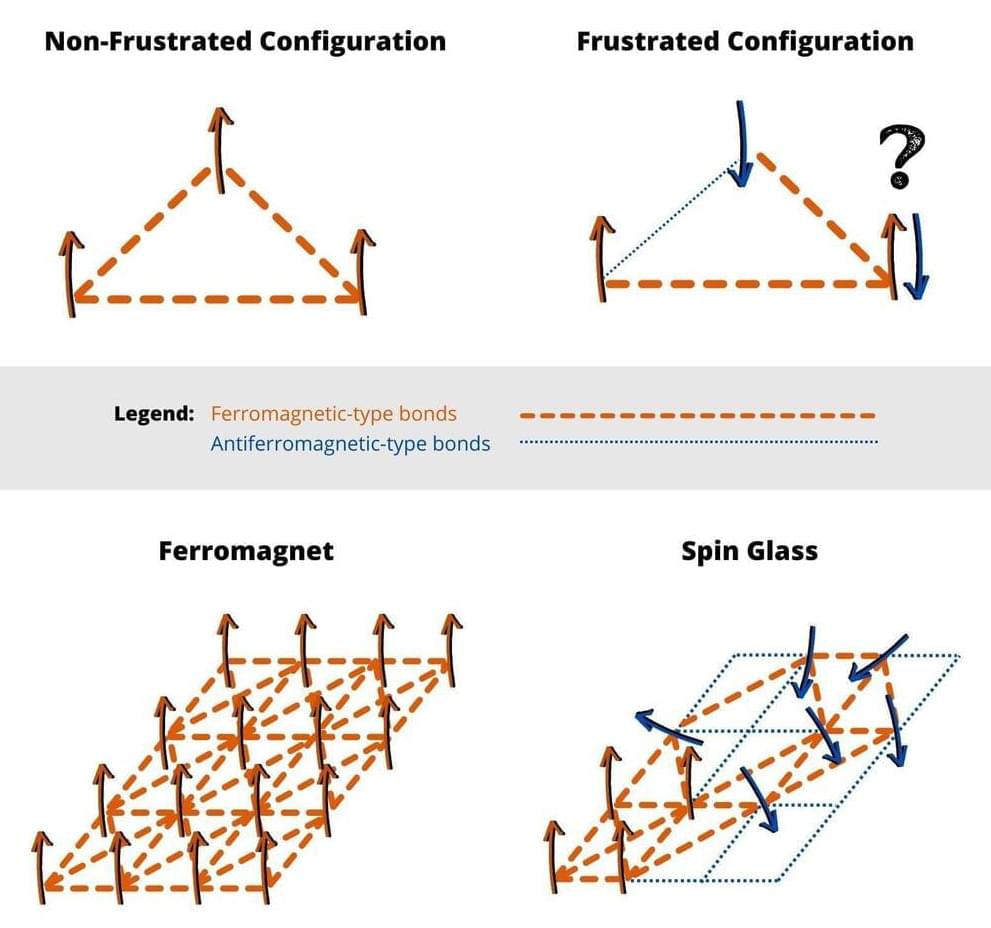

In 1994, the computer scientist Peter Shor discovered that if quantum computers were ever invented, they would decimate much of the infrastructure used to protect information shared online. That frightening possibility has had researchers scrambling to produce new, “post-quantum” encryption schemes, to save as much information as they could from falling into the hands of quantum hackers.

Earlier this year, the National Institute of Standards and Technology revealed four finalists in its search for a post-quantum cryptography standard. Three of them use “lattice cryptography” — a scheme inspired by lattices, regular arrangements of dots in space.

Lattice cryptography and other post-quantum possibilities differ from current standards in crucial ways. But they all rely on mathematical asymmetry. The security of many current cryptography systems is based on multiplication and factoring: Any computer can quickly multiply two numbers, but it could take centuries to factor a cryptographically large number into its prime constituents. That asymmetry makes secrets easy to encode but hard to decode.
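A rough sketch of that asymmetry, under stated assumptions: the primes 1009 and 2003 are arbitrary and far smaller than anything used in practice, and trial division is chosen only because it is simple, not because it is the best known factoring method. The function names are illustrative.

```python
def multiply(p, q):
    """The easy direction: a single multiplication."""
    return p * q


def factor_by_trial_division(n):
    """The hard direction: search for the smallest divisor of n.

    Returns (d, n // d) for the smallest divisor d > 1,
    or (n, 1) when n is prime. The loop runs up to sqrt(n) steps,
    which grows quickly as n gets longer.
    """
    if n % 2 == 0:
        return 2, n // 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 2
    return n, 1


n = multiply(1009, 2003)
print(n, factor_by_trial_division(n))
```

Multiplying takes one step, while the search for a factor has to try hundreds of candidates even for this seven-digit number. For numbers hundreds of digits long, this naive search becomes hopeless.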

Like most physicists, I spent much of my career ignoring the majority of quantum mechanics. I was taught the theory in graduate school and applied the mechanics here and there when an interesting problem required it … and that’s about it.

Despite its fearsome reputation, the mathematics of quantum theory is actually rather straightforward. Once you get used to the ins and outs, it’s simpler to solve a wide variety of problems in quantum mechanics than it is in, say, general relativity. And that ease of computation—and the confidence that goes along with wielding the theory—mask most of the deeper issues that hide below the surface.

Deeper issues like the fact that quantum mechanics doesn’t make any sense. Yes, it’s one of the most successful (if not the most successful) theories in all of science. And yes, a typical high school education will give you all the mathematical tools you need to introduce yourself to its inner workings. And yes, for over a century we have failed to come up with an alternative theory of the subatomic universe. Those are all true statements, and yet: Quantum mechanics doesn’t make any sense.

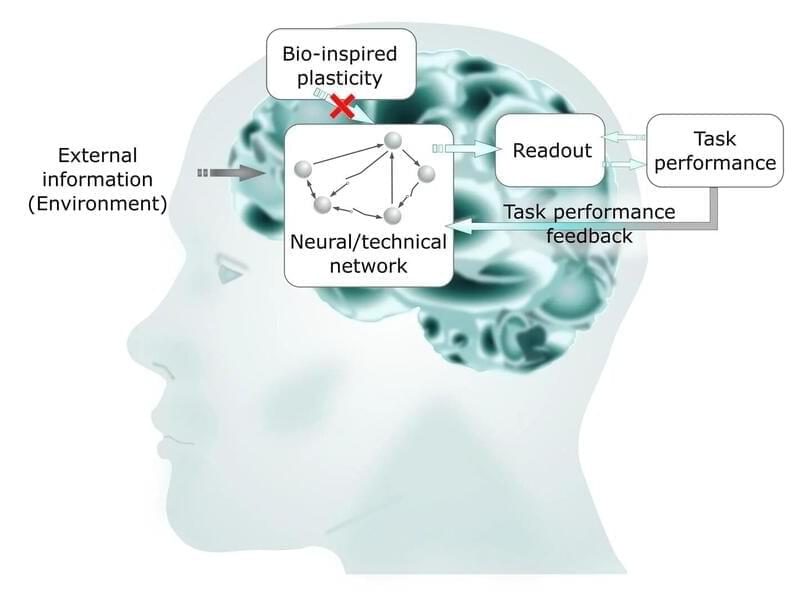

With mathematical modeling, a research team has now succeeded in better understanding how the optimal working state of the human brain, called criticality, is achieved. Their results mark an important step toward biologically inspired information processing and new, highly efficient computer technologies, and have been published in Scientific Reports.

“In particular tasks, supercomputers are better than humans, for example in the field of artificial intelligence. But they can’t manage the variety of tasks in everyday life: driving a car first, then making music and telling a story at a get-together in the evening,” explains Hermann Kohlstedt, professor of nanoelectronics. Moreover, today’s computers and smartphones still consume an enormous amount of energy.

“These are not sustainable technologies, while our brain consumes just 25 watts in everyday life,” Kohlstedt continues. The aim of their interdisciplinary research network, “Neurotronics: Bio-inspired Information Pathways,” is therefore to develop new electronic components for more energy-efficient computer architectures. For this purpose, the alliance of engineering, life and natural sciences investigates how the human brain works and how it developed.

An in-depth survey of the various technologies for spaceship propulsion, from those we can expect to see in a few years to those at the edge of theoretical science. We’ll break them down to basics and familiarize ourselves with the concepts.

Note: I made a rather large math error about the force per power the EmDrive exerts at 32:10. Initial tentative results for thrust are a good deal higher than I calculated compared to a flashlight.

Visit the sub-reddit:

https://www.reddit.com/r/IsaacArthur/

Join the Facebook Group:

https://www.facebook.com/groups/1583992725237264/

Visit our Website:

http://www.isaacarthur.net.

Support the Channel on Patreon:

https://www.patreon.com/IsaacArthur.

Listen or Download the audio of this episode from Soundcloud:

His work will be published soon.

Shanghai-born Zhang Yitang is a professor of mathematics at the University of California, Santa Barbara. If a 111-page manuscript allegedly written by him passes peer review, he might become the first person to solve the Riemann hypothesis, the South China Morning Post (SCMP) reported.

The Riemann hypothesis is a puzzle more than 150 years old that the mathematical community considers the holy grail of the field. Published in 1859, it is a fascinating conjecture about prime numbers and how they can be predicted.

Riemann hypothesized that prime numbers do not occur erratically but rather follow the frequency of an elaborate function, which is called the Riemann zeta function. Using this function, one can reliably predict where prime numbers occur, but more than a century later, no mathematician has been able to prove this hypothesis.
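To get a feel for the regularity hidden in the primes, here is a minimal sketch that counts primes exactly with a sieve and compares the count with the classical estimate x / ln(x) from the prime number theorem. This is an illustration added for context, not Riemann's or Zhang's method. The function names are hypothetical, and the simple estimate used here is cruder than the ones the Riemann hypothesis concerns.

```python
import math


def prime_count(x):
    """Exact number of primes <= x, using the sieve of Eratosthenes."""
    if x < 2:
        return 0
    is_prime = [True] * (x + 1)
    is_prime[0] = is_prime[1] = False
    for n in range(2, math.isqrt(x) + 1):
        if is_prime[n]:
            # Cross out every multiple of n starting at n * n.
            for multiple in range(n * n, x + 1, n):
                is_prime[multiple] = False
    return sum(is_prime)


def log_estimate(x):
    """Prime number theorem estimate x / ln(x)."""
    return x / math.log(x)


for x in (100, 1000, 10000):
    print(x, prime_count(x), round(log_estimate(x), 1))
```

The exact counts and the estimates track each other as x grows. The hypothesis is, roughly speaking, a statement about how small the gap between the true count and the best such estimate must be.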

Circa 2016.

Two basic types of encryption schemes are used on the internet today. One, known as symmetric-key cryptography, follows the same pattern that people have been using to send secret messages for thousands of years. If Alice wants to send Bob a secret message, they start by getting together somewhere they can’t be overheard and agree on a secret key; later, when they are separated, they can use this key to send messages that Eve the eavesdropper can’t understand even if she overhears them. This is the sort of encryption used when you set up an online account with your neighborhood bank; you and your bank already know private information about each other, and use that information to set up a secret password to protect your messages.

The second scheme is called public-key cryptography, and it was invented only in the 1970s. As the name suggests, these are systems where Alice and Bob agree on their key, or part of it, by exchanging only public information. This is incredibly useful in modern electronic commerce: if you want to send your credit card number safely over the internet to Amazon, for instance, you don’t want to have to drive to their headquarters to have a secret meeting first. Public-key systems rely on the fact that some mathematical processes seem to be easy to do, but difficult to undo. For example, for Alice to take two large whole numbers and multiply them is relatively easy; for Eve to take the result and recover the original numbers seems much harder.
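As a hedged illustration of agreeing on a key by exchanging only public information, here is a toy sketch in the style of Diffie-Hellman key exchange. Note the swap: this scheme rests on the difficulty of undoing modular exponentiation rather than on factoring, and the numbers (modulus 23, base 5, secrets 6 and 15) are textbook-sized assumptions chosen for readability, not secure parameters.

```python
# Public values that Alice, Bob, and Eve can all see (toy sizes, not secure).
MODULUS = 23
BASE = 5


def public_value(secret, base=BASE, modulus=MODULUS):
    """Easy direction: modular exponentiation of the public base."""
    return pow(base, secret, modulus)


def shared_key(their_public, my_secret, modulus=MODULUS):
    """Combine the other party's public value with one's own secret."""
    return pow(their_public, my_secret, modulus)


alice_secret, bob_secret = 6, 15          # never transmitted
alice_public = public_value(alice_secret)  # sent in the clear
bob_public = public_value(bob_secret)      # sent in the clear

alice_key = shared_key(bob_public, alice_secret)
bob_key = shared_key(alice_public, bob_secret)
print(alice_public, bob_public, alice_key, bob_key)
```

Alice and Bob arrive at the same key even though only the public values crossed the wire. Eve sees those public values, but recovering either secret from them is the hard direction, at least when the numbers are large.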

Public-key cryptography was invented by researchers at the Government Communications Headquarters (GCHQ) — the British equivalent (more or less) of the US National Security Agency (NSA) — who wanted to protect communications between a large number of people in a security organization. Their work was classified, and the British government neither used it nor allowed it to be released to the public. The idea of electronic commerce apparently never occurred to them. A few years later, academic researchers at Stanford and MIT rediscovered public-key systems. This time they were thinking about the benefits that widespread cryptography could bring to everyday people, not least the ability to do business over computers.

Los Alamos National Laboratory researchers have developed a novel method for comparing neural networks that looks into the “black box” of artificial intelligence to help researchers comprehend neural network behavior. Neural networks identify patterns in datasets and are utilized in applications as diverse as virtual assistants, facial recognition systems, and self-driving vehicles.

“The artificial intelligence research community doesn’t necessarily have a complete understanding of what neural networks are doing; they give us good results, but we don’t know how or why,” said Haydn Jones, a researcher in the Advanced Research in Cyber Systems group at Los Alamos. “Our new method does a better job of comparing neural networks, which is a crucial step toward better understanding the mathematics behind AI.”

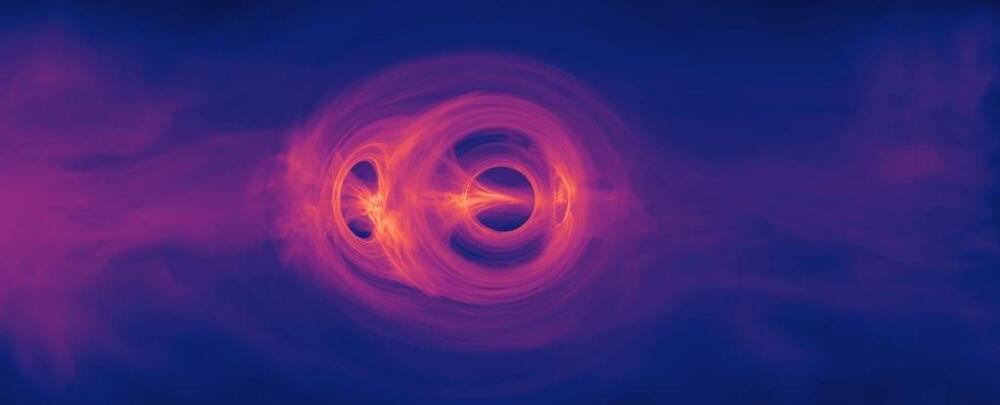

For the better part of a century, quantum physics and the general theory of relativity have been a marriage on the rocks. Each perfect in its own way, the two just can’t stand each other when in the same room.

Now a mathematical proof on the quantum nature of black holes just might show us how the two can reconcile, at least enough to produce a grand new theory on how the Universe works on cosmic and microcosmic scales.

A team of physicists has mathematically demonstrated a weird quirk concerning how these mind-bendingly dense objects might exist in a state of quantum superposition, simultaneously occupying a spectrum of possible characteristics.