A new quantum-inspired algorithm is reshaping how scientists approach some of the most complex materials known, enabling rapid analysis of structures that were previously beyond computational reach.

Check out the Universe in a Black Hole Merch at the Space Time Merch Store

https://www.pbsspacetime.com/shop.

Kurt Gödel discovered a solution to General Relativity that allows time travel without any exotic physics, revealing that the theory doesn’t actually guarantee a consistent chain of cause and effect. His “Gödel universe” shows that under certain conditions, the structure of spacetime itself can loop back on itself—blurring the line between past and future and exposing a deep limitation in our understanding of reality.

Sign Up on Patreon to get access to the Space Time Discord!

/ pbsspacetime.

Sign up for the mailing list to get episode notifications and hear special announcements!

https://mailchi.mp/1a6eb8f2717d/space…

Search the Entire Space Time Library Here: https://search.pbsspacetime.com/

Hosted by Matt O’Dowd. Written by Matt O’Dowd. Post Production by Leonardo Scholzer. Directed by Andrew Kornhaber. Associate Producer: Bahar Gholipour. Executive Producer: Andrew Kornhaber. Executive in Charge for PBS: Maribel Lopez. Director of Programming for PBS: Gabrielle Ewing. Assistant Director of Programming for PBS: Mike Martin.

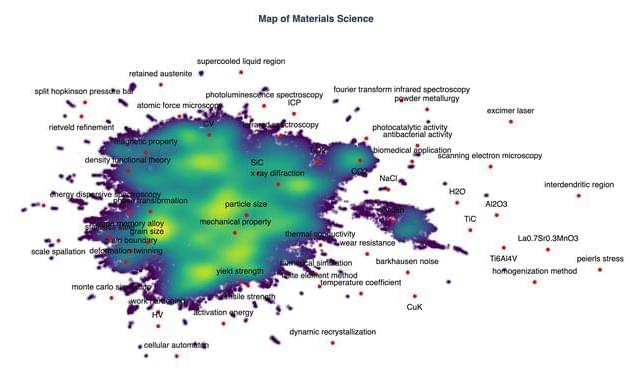

Large language models (LLMs) could help human scientists identify interesting research topics that have not previously been explored, say scientists at Germany’s Karlsruhe Institute of Technology (KIT). By analysing abstracts in materials science publications and mapping connections between different concepts, the model was able to generate predictions for future areas of interest that the KIT team says are more precise than those produced by traditional, rule-based algorithms.

The number of research articles published each year is increasing so quickly that it is impossible for scientists to keep up with everything, observes team leader Pascal Friederich, who heads a KIT research group on artificial intelligence for materials sciences. While experienced scientists know how to find connections between research areas within their field, identifying links between these and other, unfamiliar topics is a different story.
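To make the idea of "mapping connections between different concepts" concrete, here is a minimal Python sketch of a simple rule-based baseline: concepts that appear in the same abstract are linked, and unlinked pairs are ranked by how many neighbours they share. This is an illustrative assumption about what a rule-based predictor might look like, not the KIT team's model; the function names and the toy abstracts are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def build_concept_graph(abstract_concepts):
    """Link every pair of concepts that co-occur in at least one abstract."""
    neighbors = defaultdict(set)
    for concepts in abstract_concepts:
        for a, b in combinations(sorted(set(concepts)), 2):
            neighbors[a].add(b)
            neighbors[b].add(a)
    return neighbors


def rank_unlinked_pairs(neighbors):
    """Score each not-yet-linked pair by its number of shared neighbours."""
    scores = []
    for a, b in combinations(sorted(neighbors), 2):
        if b in neighbors[a]:
            continue  # already connected, so not a "new" topic
        shared = len(neighbors[a] & neighbors[b])
        if shared:
            scores.append((shared, a, b))
    # Highest score first; ties broken alphabetically for reproducibility.
    return sorted(scores, key=lambda s: (-s[0], s[1], s[2]))


# Hypothetical concept lists, one per abstract (made up for illustration).
toy_abstracts = [
    ["perovskite", "solar cell", "thin film"],
    ["thin film", "machine learning"],
    ["perovskite", "stability"],
    ["machine learning", "stability"],
]

graph = build_concept_graph(toy_abstracts)
ranked = rank_unlinked_pairs(graph)
for shared, a, b in ranked:
    print(f"{a} + {b}: {shared} shared neighbour(s)")
```

A language-model approach would replace the shared-neighbour count with a learned score, but the input (a concept graph) and the output (a ranked list of unexplored pairs) have the same shape.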

PBS Member Stations rely on viewers like you. To support your local station, go to: http://to.pbs.org/DonateSPACE

In his essay “The Unreasonable Effectiveness of Mathematics in the Natural Sciences”, the physicist Eugene Wigner said that “the enormous usefulness of mathematics in the natural sciences is something bordering on the mysterious”. This statement was inspired by the observation that so many aspects of the physical world, especially quantum phenomena, seem to be describable and predictable by mathematical equations to incredible precision. But quantum phenomena have no subjective qualities and have questionable physicality. They seem to be completely describable by numbers alone, and their behavior precisely defined by equations. In a sense, the quantum world is made of math. So does that mean the universe is made of math too? If you believe the Mathematical Universe Hypothesis, then yes. And so are you.

#space #universe #maths.

Take the Space Time Fan Survey Here: https://forms.gle/wS4bj9o3rvyhfKzUA

If you used every particle in the observable universe to do a full quantum simulation, how big would that simulation be? At best a large molecule. That’s how insanely information dense the quantum wavefunction really is. And yet we routinely simulate systems with thousands, even millions of particles. How? By cheating. Using the ultimate compression algorithm: Density Functional Theory (DFT). Let’s learn how to cheat the universe.
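A rough back-of-the-envelope count shows why the full wavefunction is so expensive and why working with the electron density is such a saving. The Python sketch below assumes a crude model not taken from the episode: space is sampled on a grid, the wavefunction needs one amplitude per combination of electron positions (spin and symmetry are ignored), and the observable universe offers about 10^80 particles to store one amplitude each. All names are hypothetical.

```python
import math

# Common order-of-magnitude estimate for particles in the observable universe.
LOG10_PARTICLE_BUDGET = 80


def log10_wavefunction_amplitudes(n_electrons, grid_points_per_axis):
    """log10 of the amplitude count: (grid^3) ** n_electrons."""
    return 3 * n_electrons * math.log10(grid_points_per_axis)


def log10_density_values(grid_points_per_axis):
    """log10 of the value count for the density: grid^3, whatever n_electrons is."""
    return 3 * math.log10(grid_points_per_axis)


def max_electrons_storable(grid_points_per_axis, log10_budget=LOG10_PARTICLE_BUDGET):
    """Largest electron count whose full wavefunction fits in the budget."""
    return math.floor(log10_budget / (3 * math.log10(grid_points_per_axis)))


coarse_limit = max_electrons_storable(10)   # 10 grid points per axis
finer_limit = max_electrons_storable(100)   # 100 grid points per axis
print(f"Coarse grid: at most {coarse_limit} electrons")
print(f"Finer grid:  at most {finer_limit} electrons")
print(f"Density on the finer grid: 10^{log10_density_values(100):.0f} values")
```

On this count the wavefunction's storage grows exponentially with the number of electrons, while the density's storage does not grow with it at all. That gap is the "compression" DFT exploits.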

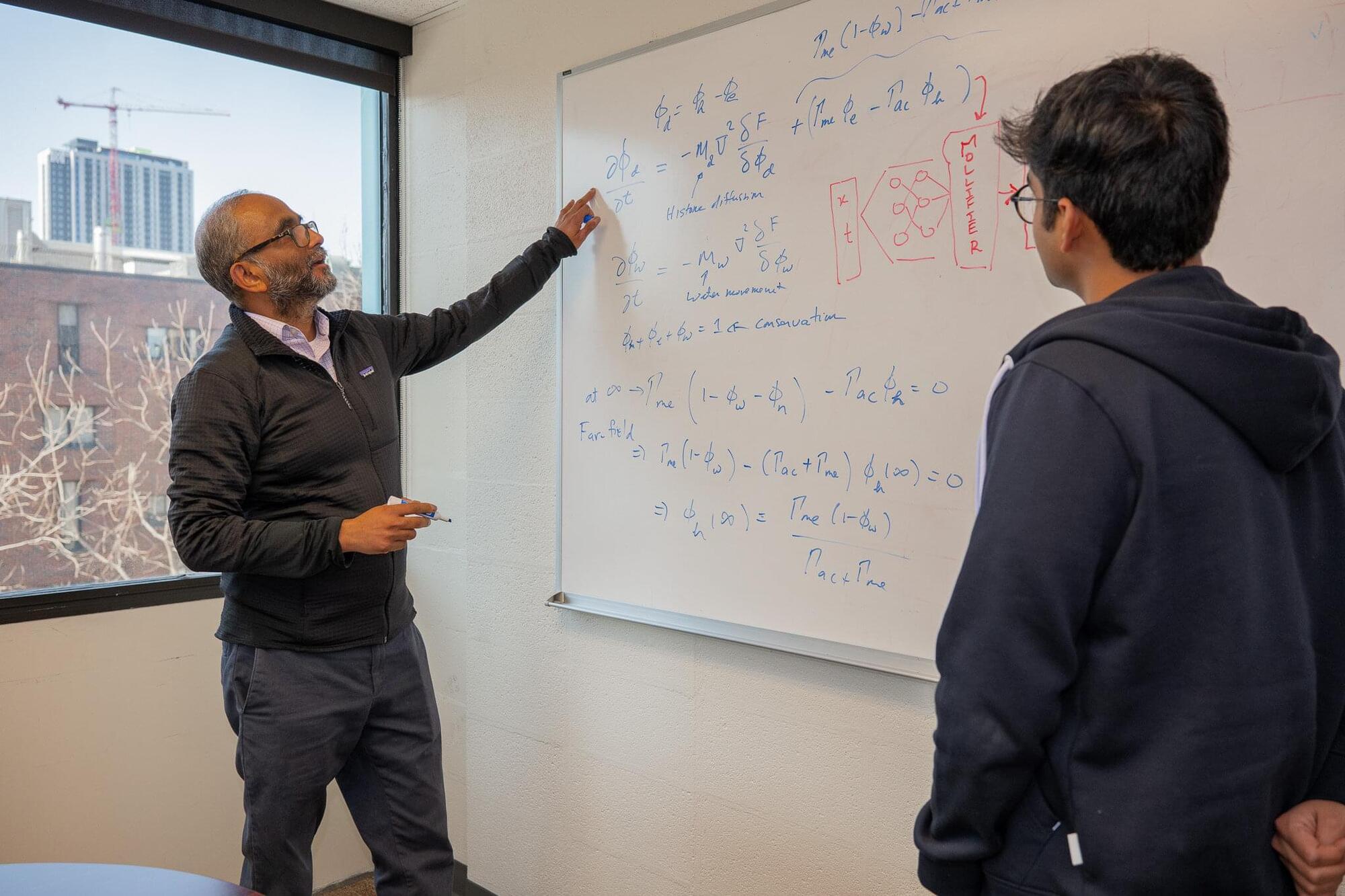

Penn Engineers have developed a new way to use AI to solve inverse partial differential equations (PDEs), a particularly challenging class of mathematical problems with broad implications for understanding the natural world.

The advance, which the researchers call “Mollifier Layers,” could benefit fields as varied as genetics and weather forecasting, because inverse PDEs help scientists work backward from observable patterns to infer the hidden dynamics that produced them.

“Solving an inverse problem is like looking at ripples in a pond and working backward to figure out where the pebble fell,” says Vivek Shenoy, Eduardo D. Glandt President’s Distinguished Professor in Materials Science and Engineering (MSE) and senior author of a study published in Transactions on Machine Learning Research (TMLR), which will be presented at the Conference on Neural Information Processing Systems (NeurIPS 2026). “You can see the effects clearly, but the real challenge is inferring the hidden cause.”
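As a toy illustration of "working backward", the Python sketch below recovers a hidden diffusion coefficient from observations of a 1D heat equation, u_t = D u_xx. It is not the Mollifier Layers method, whose details are not given here; it uses plain finite differences and least squares, and the setup (exact sine-mode solution, grid sizes, function names) is assumed purely for illustration.

```python
import numpy as np


def observe_heat_solution(diffusivity, x, t):
    """Exact solution u(x, t) = exp(-D pi^2 t) sin(pi x) of u_t = D u_xx on [0, 1]."""
    decay = np.exp(-diffusivity * np.pi**2 * t[:, None])
    return decay * np.sin(np.pi * x[None, :])


def estimate_diffusivity(u, x, t):
    """Least-squares fit of D in u_t = D u_xx using central differences."""
    dx = x[1] - x[0]
    dt = t[1] - t[0]
    # Derivatives on interior grid points only (rows are time, columns are space).
    u_t = (u[2:, 1:-1] - u[:-2, 1:-1]) / (2 * dt)
    u_xx = (u[1:-1, 2:] - 2 * u[1:-1, 1:-1] + u[1:-1, :-2]) / dx**2
    # Minimise sum (u_t - D u_xx)^2 over D.
    return float(np.sum(u_t * u_xx) / np.sum(u_xx**2))


x = np.linspace(0.0, 1.0, 101)
t = np.linspace(0.0, 1.0, 201)
true_diffusivity = 0.1  # the "hidden cause" we pretend not to know

observations = observe_heat_solution(true_diffusivity, x, t)
estimated_diffusivity = estimate_diffusivity(observations, x, t)
print(f"true D = {true_diffusivity}, estimated D = {estimated_diffusivity:.5f}")
```

This works here because the data are noise-free. Differentiating real, noisy measurements amplifies the noise, which is one reason inverse PDE problems are considered hard.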

The concept of spacetime, first described in Einstein’s theory of general relativity, has since been studied by physicists worldwide. Spacetime is described mathematically as a four-dimensional (4D) continuum in which physical events occur, merging three-dimensional (3D) space with one-dimensional (1D) time.

This 4D continuum evolves in complex and intricate patterns governed by Einstein’s field equations, which describe how matter and energy shape spacetime. While past theoretical studies have explored the evolution of spacetime, identifying patterns that persist as it evolves has proved challenging.

Researchers at Adolfo Ibáñez University in Chile and Columbia University set out to explore the evolution of spacetime using ideas rooted in nonlinear electrodynamics, an area of physics that studies the behavior of electric and magnetic fields in complex materials.

Shape of the universe and Cosmological Constant.

🚨 The Biggest Problem in Physics (Cosmological Constant) https://lnkd.in/gt7tEpJw

❓ Problem: Why is the Universe accelerating… and why is the value so unbelievably small?

Observations (supernovae, CMB, BAO) show:

👉 The expansion is accelerating

👉 This requires a cosmological constant Λ

From Einstein’s equation: Λ = 8πG ρ_Λ

😳 But here’s the crisis: Quantum physics predicts vacuum energy: ρ_vac ≈ M_Pl⁴

But observations give: ρ_Λ ≈ 10⁻¹²⁰ M_Pl⁴

💥 That’s a mismatch of 120 orders of magnitude. This is called the cosmological constant problem.

🧠 Standard thinking fails because we assume:

👉 Energy fills space uniformly

👉 Λ comes from summing quantum fluctuations: ρ_vac = (1/V) Σ (½ ℏωₖ)

But this diverges → way too large ❌

💡 A different perspective (EWOG insight): Instead of asking 👉 “What is the energy of empty space?”, ask 👉 “What is the geometry of the Universe?”
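The size of the mismatch can be checked with a few lines of arithmetic. The Python sketch below compares the Planck density, c⁵/(ℏG²), with the dark-energy density implied by an assumed Hubble constant and dark-energy fraction. The cosmological inputs (H0 = 67.4 km/s/Mpc, Ω_Λ = 0.685) and the rounded constants are assumptions chosen for illustration and do not come from the post.

```python
import math

# Physical constants in SI units (rounded).
C = 2.998e8            # speed of light, m/s
G = 6.674e-11          # Newton's constant, m^3 kg^-1 s^-2
HBAR = 1.0546e-34      # reduced Planck constant, J s
MPC_IN_M = 3.0857e22   # one megaparsec in metres

# Assumed cosmological inputs (illustrative, not from the post).
H0_KM_S_MPC = 67.4     # Hubble constant
OMEGA_LAMBDA = 0.685   # dark-energy fraction of the critical density


def planck_density():
    """Planck mass density c^5 / (hbar G^2), in kg/m^3."""
    return C**5 / (HBAR * G**2)


def dark_energy_density(h0_km_s_mpc, omega_lambda):
    """Mass density attributed to the cosmological constant, in kg/m^3."""
    h0_si = h0_km_s_mpc * 1e3 / MPC_IN_M               # convert to 1/s
    critical_density = 3 * h0_si**2 / (8 * math.pi * G)
    return omega_lambda * critical_density


ratio = dark_energy_density(H0_KM_S_MPC, OMEGA_LAMBDA) / planck_density()
orders_of_magnitude = -math.log10(ratio)
print(f"rho_Lambda / rho_Planck is about 10^-{orders_of_magnitude:.0f}")
```

With these inputs the ratio comes out near 10⁻¹²³. Using the reduced Planck mass instead lowers the exponent by roughly three, which is why figures between 120 and 123 are commonly quoted.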

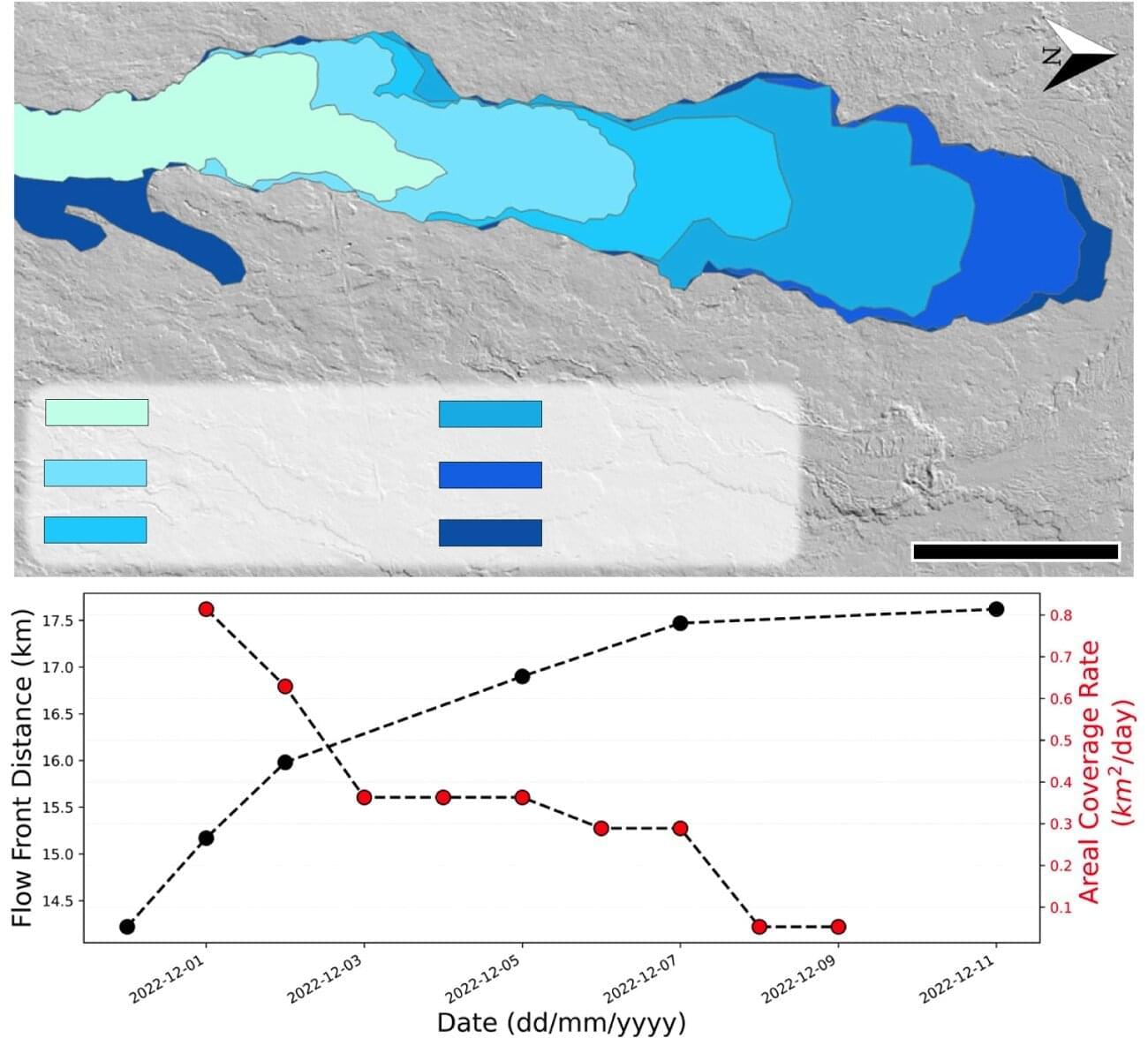

When Mauna Loa erupted in 2022, the largest lava flow headed directly toward Daniel K. Inouye State Highway 200, also known as Saddle Road, a critical route that carries many residents from their homes on one side of the island to their jobs on the other.

No one could accurately predict whether the lava would continue to flow and eventually block the highway, or stop short, sparing the road.

However, when the volcano next erupts, scientists will be better able to monitor the eruption in real time and make more accurate predictions about where the lava will flow and when the volcano might erupt. These advances are possible thanks to satellite data from public and private sources, as well as machine learning algorithms developed at Pitt with help from a colleague in Italy, as highlighted in a recent publication in the Journal of Volcanology and Geothermal Research.