Called Evo, the AI was inspired by the large language models, or LLMs, that underlie popular chatbots such as OpenAI’s ChatGPT and Anthropic’s Claude. These models have taken the world by storm for their prowess at generating human-like responses. From simple tasks, such as defining an obscure word, to summarizing scientific papers or spewing verses fit for a rap battle, LLMs have entered our everyday lives.

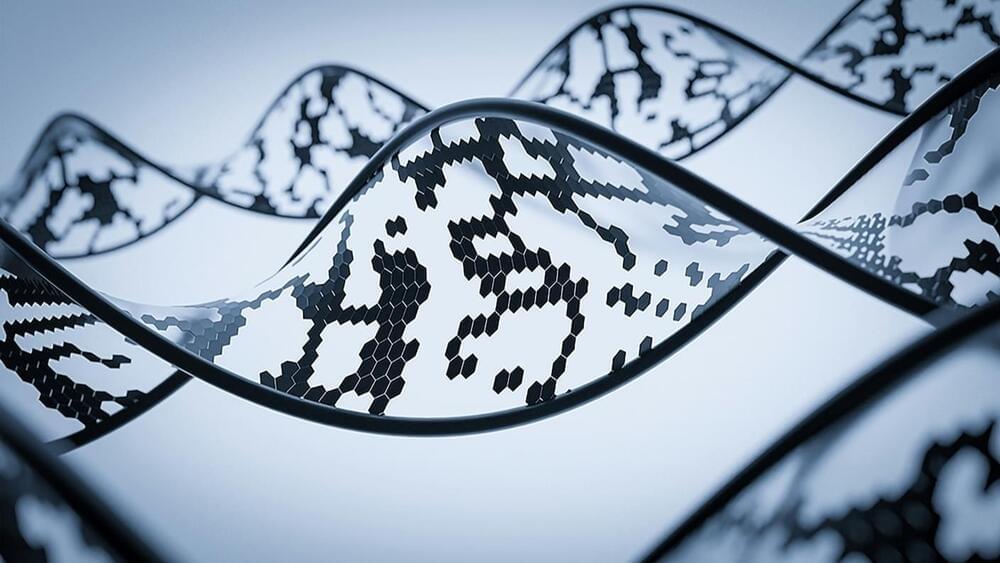

If LLMs can master written languages, could they do the same for the language of life?

This month, a team from Stanford University and the Arc Institute put the theory to the test. Rather than training Evo on content scraped from the internet, they trained the AI on nearly three million genomes—amounting to billions of lines of genetic code—from various microbes and bacteria-infecting viruses.
